05.07.2026

Agaruda Cinta emerges at the frontier of NVIDIA Omniverse DSX’s vision for the AI factory, helping shape its next stage

Industry analysis ・ Platform strategy | May 2026

When NVIDIA unveiled the Vera Rubin DSX and Omniverse DSX Blueprint in March 2026, the industry saw one thing clearly: the rules of competition for AI infrastructure had changed. It is no longer about "who can build a bigger factory," but about "who can compute the fate of every watt of electricity before breaking ground."

But hidden inside this ambitious blueprint is a square that hasn't been filled in. To see it, you first have to understand what DSX rewrote — and what it didn't.

Part I: The three rules DSX rewrote

Rule 1: The KPI shifted from "saving power" to "how much money each watt prints"

For the past two decades, the core KPI of data centers was PUE (Power Usage Effectiveness) — a cost-control metric through and through. But an AI factory is not a passive cost center for storing data; it is a production line that takes in electricity and outputs monetizable tokens. A new KPI emerged: Tokens-per-Watt.

This shift exposed an underestimated number: at gigawatt scale, as much as 40% of electricity evaporates before it ever reaches the chip — lost to inefficient cooling, distribution losses, and conservative over-provisioning. Translated into business language: if a 1 GW factory has potential annual revenue of $200 billion, every 1% of efficiency lost equals $2 billion vanishing into thin air.
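The arithmetic behind that claim can be checked in a few lines. This is a minimal sketch: the function names are illustrative, the $200 billion figure is the hypothetical revenue used above, and the Tokens-per-Watt formula is one plausible reading of the KPI rather than a definition given by the source. The 40% line also assumes, for simplicity, that revenue scales linearly with power delivered to the chip.

```python
def revenue_lost(annual_revenue_usd: int, efficiency_loss_pct: float) -> float:
    """Revenue forgone when a given percentage of potential output is lost."""
    return annual_revenue_usd * efficiency_loss_pct / 100


def tokens_per_watt(tokens_per_second: float, facility_power_watts: float) -> float:
    """Assumed KPI definition: token throughput divided by facility power draw."""
    return tokens_per_second / facility_power_watts


annual_revenue = 200_000_000_000  # hypothetical 1 GW factory from the text

print(revenue_lost(annual_revenue, 1))   # 2000000000.0
print(revenue_lost(annual_revenue, 40))  # 80000000000.0
```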

Rule 2: From "build-while-you-fix" to "simulate first, then deploy perfectly"

The new generation of Vera Rubin and GB300 systems pushes rack power density to 130–150 kW, with some custom designs exceeding 200 kW. At this density, the traditional "build-while-you-fix" engineering workflow will simply bankrupt you — every month of delayed deployment costs anywhere from $100M to $200M in lost revenue.
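To make the cost of delay concrete, the monthly range quoted above can be multiplied out. This is only a sketch: the function name is illustrative, and the three-month slip is a hypothetical example, not a figure from any project.

```python
# Low and high monthly revenue loss quoted in the text, in USD.
MONTHLY_LOSS_USD = (100_000_000, 200_000_000)


def delay_cost_range(months_delayed: int) -> tuple[int, int]:
    """Return the (low, high) revenue lost for a given deployment delay."""
    low, high = MONTHLY_LOSS_USD
    return months_delayed * low, months_delayed * high


low, high = delay_cost_range(3)  # hypothetical three-month slip
print(f"3-month slip: ${low / 1e9:.1f}B to ${high / 1e9:.1f}B")
```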

The core logic DSX introduces is "shift-left validation": pushing problem discovery from the expensive construction site forward into the cheap software simulation stage. This is the first time software engineering's dev/staging testing practices have been fully transplanted into heavy industry.

Rule 3: From grid vampire to grid partner

Over 200 GW of AI projects are currently waiting in the U.S. grid interconnection queue. In an era of grid scarcity, "playing nice with the grid" has become almost the sole determinant of who gets to build and who has to wait. DSX's Flex / Boost / Exchange modules allow AI factories, for the first time, to dynamically coordinate with the grid in real time.

Part II: But there's still a blank space on the blueprint

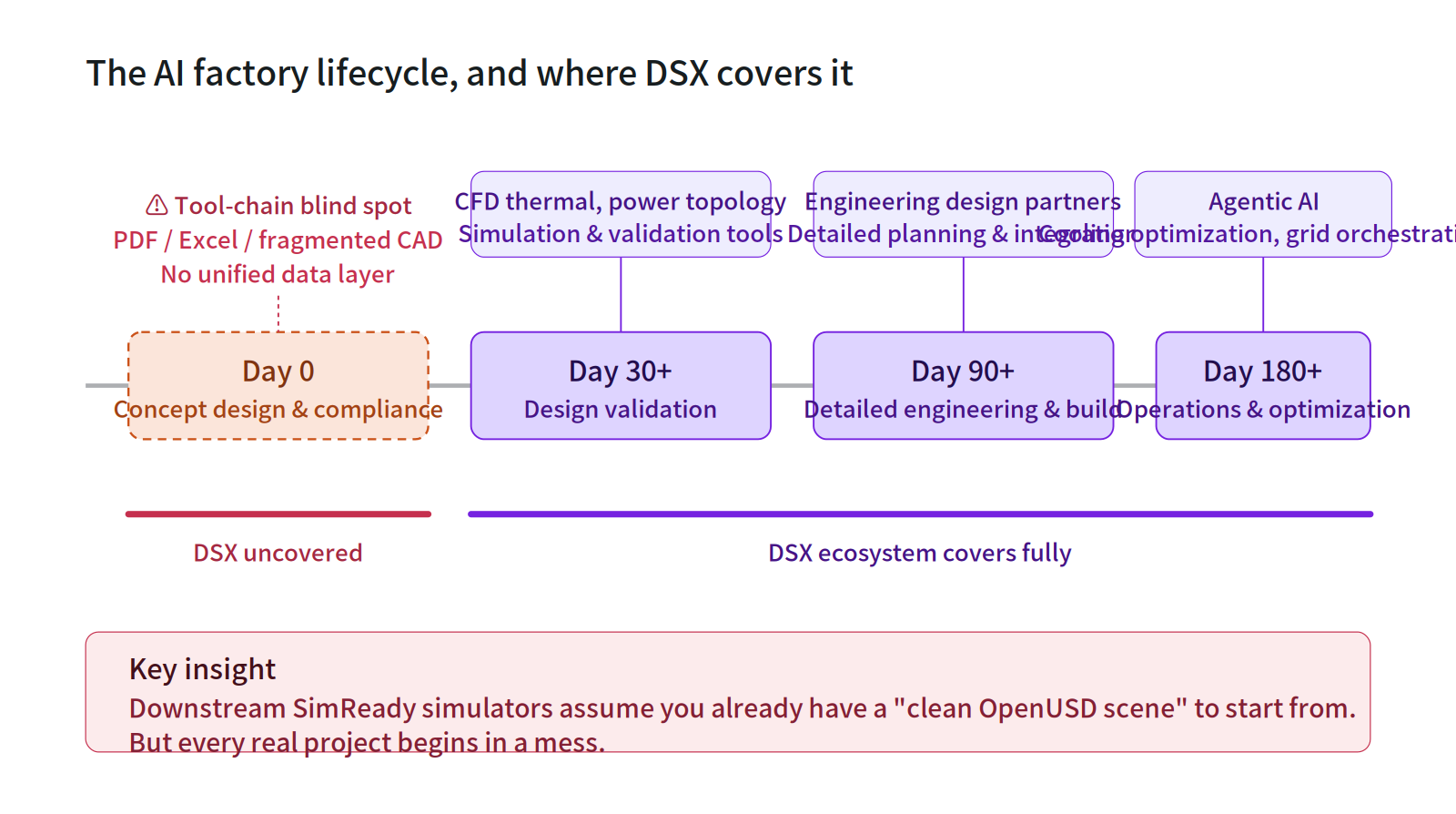

DSX paints a complete picture of life after Day 30: CFD thermal simulation, power topology validation, detailed engineering, operational cooling optimization, grid orchestration — every functional domain has its tools and partners lined up.

But Day 0 — the period before the blueprint even exists — is exactly where DSX falls silent.

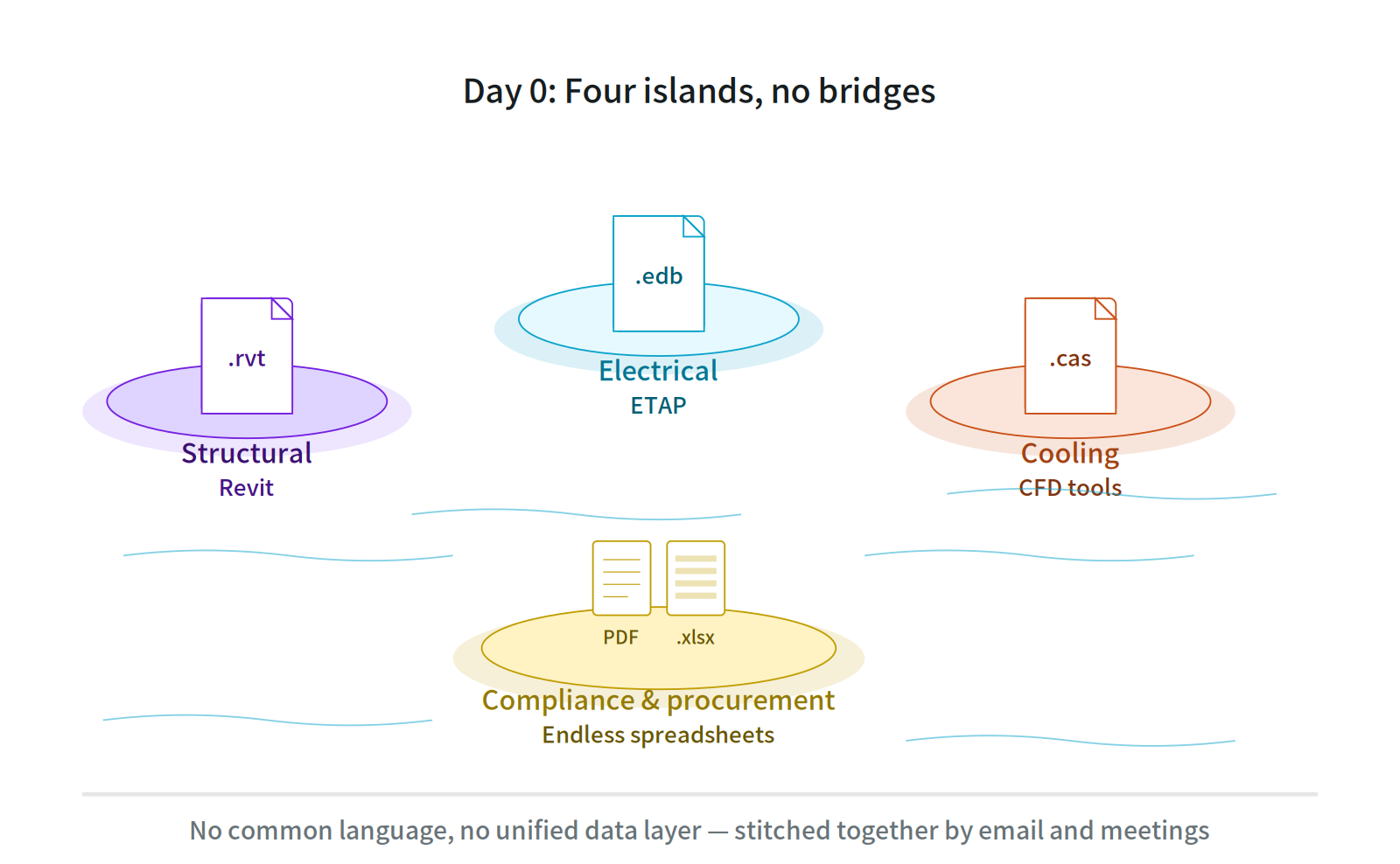

Figure 1: The AI factory lifecycle: DSX covers everything after Day 30 — but Day 0 is the upstream blind spot.

The silence isn't an oversight. The Day 0 problem genuinely doesn't fit a simulation tool. Day 0 looks like this:

- An owner hands you a PDF spec sheet, a few JPGs of the room, and an Excel BOM

- The structural engineer is in Revit (.rvt), the electrical engineer in ETAP (.edb), the cooling team in standalone CFD software (.cas)

- The compliance team is buried under endless regulatory checklists and procurement specs in PDF

- A globally distributed team — Taiwanese hardware vendor, U.S. compliance consultant, Japanese owner — barely aligned through email and meetings

Figure 2: Day 0 reality: Four file formats floating on isolated islands, no bridges between them. No common language, no unified data layer; everything is stitched together by email and meetings.

⚠️ This is the upstream blind spot of the DSX tool chain. Until those scattered assets are unified into a coherent digital twin, even the most powerful downstream SimReady simulator has nothing to work with.

In other words, DSX assumes you already have a clean, structured, OpenUSD-compliant digital twin scene ready to simulate. But in reality, no one starts from there. The real starting point of every AI factory project is chaos.

This blind spot matters because it isn't a "small problem." It defines the performance ceiling of every downstream tool: feed an accurate CFD simulation an incorrect BOM and you get incorrect thermal planning; run the most advanced compliance AI on an inconsistent equipment list and what you get back is a professionally formatted but wrong answer.
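The kind of inconsistency described here can be caught with a simple cross-check between two sources before anything reaches a simulator. The sketch below is illustrative only: the `Item` fields, the tag names, and the sample values are hypothetical and do not reflect Cinta's actual data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    tag: str         # equipment tag, e.g. a rack or CDU identifier
    quantity: int
    power_kw: float  # rated power per unit


def find_mismatches(bom: list[Item], equipment_list: list[Item]) -> list[str]:
    """Report tags missing from one source or disagreeing between the two."""
    bom_by_tag = {item.tag: item for item in bom}
    eq_by_tag = {item.tag: item for item in equipment_list}
    problems = []
    for tag in sorted(bom_by_tag.keys() | eq_by_tag.keys()):
        a, b = bom_by_tag.get(tag), eq_by_tag.get(tag)
        if a is None:
            problems.append(f"{tag}: missing from BOM")
        elif b is None:
            problems.append(f"{tag}: missing from equipment list")
        elif (a.quantity, a.power_kw) != (b.quantity, b.power_kw):
            problems.append(
                f"{tag}: BOM says {a.quantity} x {a.power_kw} kW, "
                f"equipment list says {b.quantity} x {b.power_kw} kW"
            )
    return problems


# Hypothetical sample data: the two sources disagree on rack count.
bom = [Item("RACK-A", 8, 130.0), Item("CDU-1", 2, 15.0)]
equipment = [Item("RACK-A", 10, 130.0), Item("PDU-1", 4, 0.0)]
for line in find_mismatches(bom, equipment):
    print(line)
```

A downstream thermal run fed the 8-rack BOM while the room actually holds 10 racks would be precise about the wrong load, which is the failure mode the paragraph above warns about.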

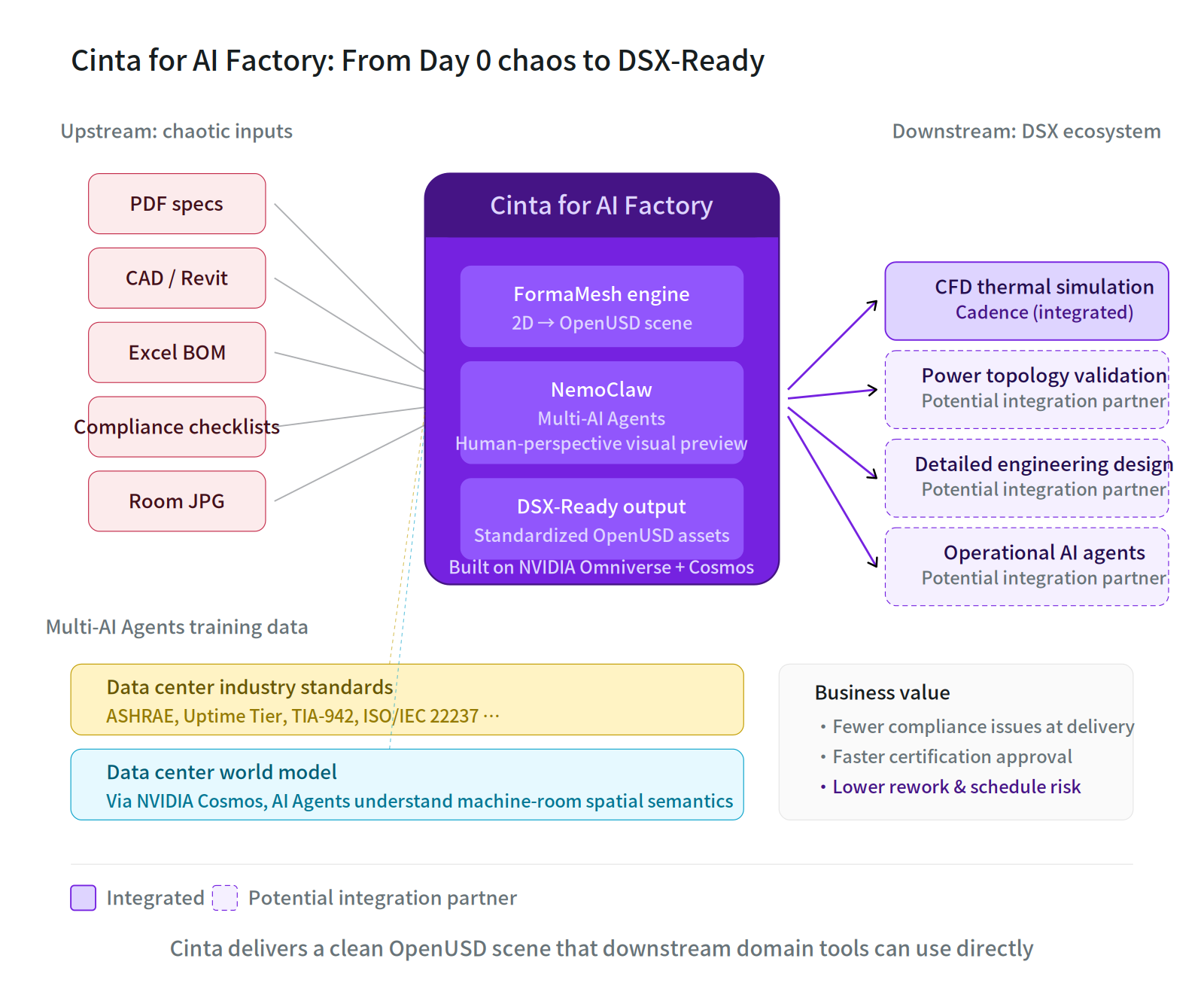

Part III: Where Cinta for AI Factory fits in

Cinta for AI Factory is a Day 0 digital collaboration platform purpose-built for AI data center deployments, built on top of the NVIDIA Omniverse DSX Blueprint + NemoClaw + Cosmos stack. It doesn't solve the simulation problem — it solves the problem before simulation: how to automatically transform scattered, multi-format, cross-team design inputs into standardized digital assets that downstream DSX ecosystem tools can read directly, while providing early-stage simulation predictions to accelerate concept-phase decisions.

This capability breaks down into six layers.

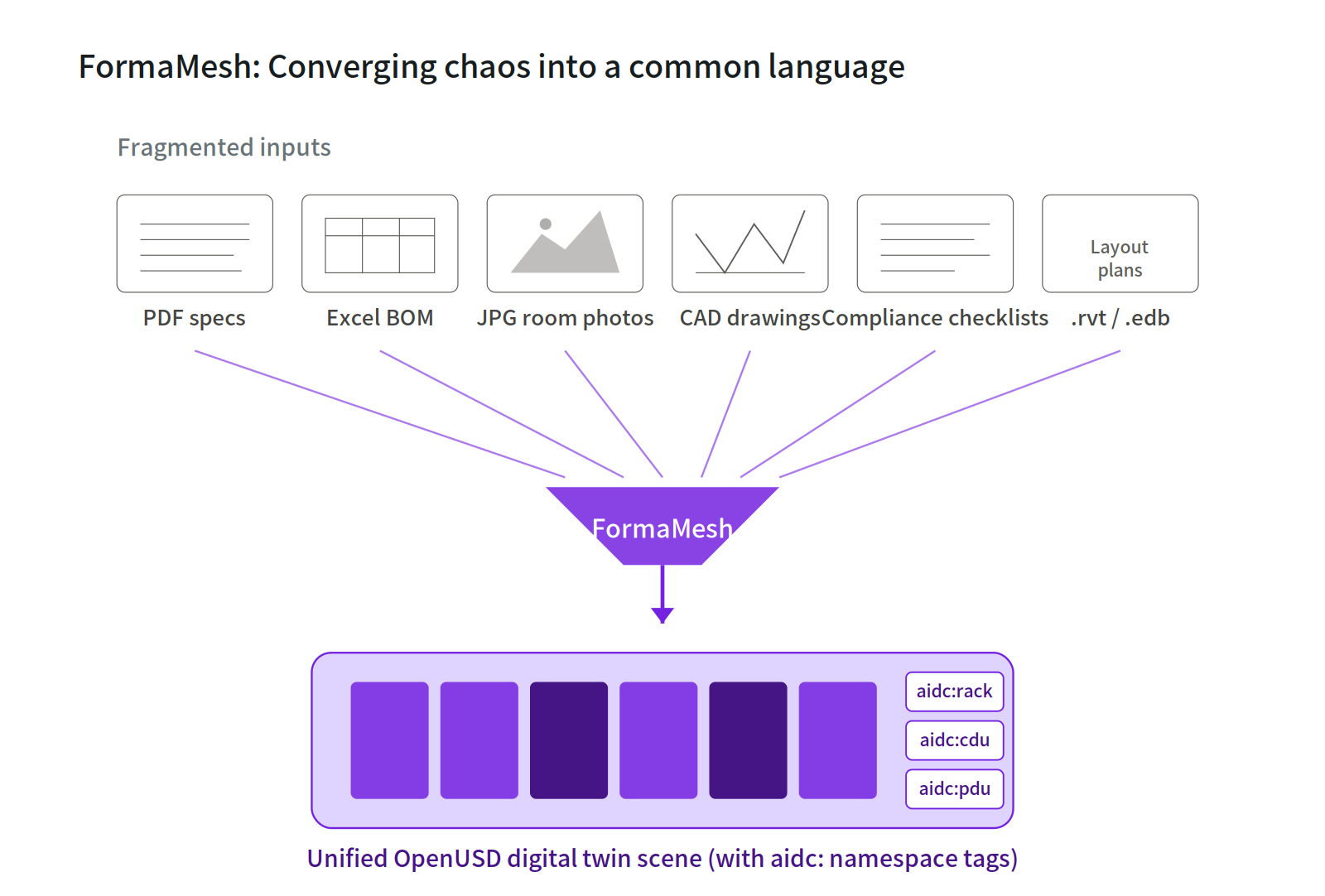

1. OpenUSD as the lingua franca

Cinta treats OpenUSD as the lingua franca for cross-disciplinary collaboration. Through the FormaMesh engine, scattered 2D inputs (CAD drawings, PDFs, JPGs) are unified into a single OpenUSD digital twin scene tagged with the dedicated aidc: namespace.

Figure 3: FormaMesh engine: Converging multi-source fragmented inputs into a unified OpenUSD scene with aidc: namespace tags.

What does this mean? The four originally siloed disciplines (power, cooling, networking, and architecture) can work in the same real-time shared 3D scene for the first time. Hardware engineers in Taiwan, compliance consultants in the U.S., and owners in Japan align on the same canvas via WebRTC streaming, with latency low enough to review a design together live.
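A unified scene of the kind described above can be pictured as a small OpenUSD text file (.usda) in which every prim carries attributes in the aidc: namespace. The sketch below writes such a file by hand with the Python standard library so that it runs anywhere; in practice the OpenUSD Python bindings would author the stage. The attribute names (`discipline`, `sourceFile`), the prim names, and the file names are hypothetical, since the source does not publish the aidc: schema.

```python
def prim_block(name: str, tags: dict[str, str], indent: str = "    ") -> str:
    """Render one Xform prim with string attributes in the aidc: namespace."""
    lines = [f'{indent}def Xform "{name}"', f"{indent}{{"]
    for key, value in tags.items():
        # Attribute names under aidc: are illustrative, not a published schema.
        lines.append(f'{indent}    custom string aidc:{key} = "{value}"')
    lines.append(f"{indent}}}")
    return "\n".join(lines)


def build_scene(root: str, prims: dict[str, dict[str, str]]) -> str:
    """Assemble a minimal .usda layer with one root prim and tagged children."""
    header = f'#usda 1.0\n(\n    defaultPrim = "{root}"\n)\n'
    body = "\n\n".join(prim_block(name, tags) for name, tags in prims.items())
    return f'{header}\ndef Xform "{root}"\n{{\n{body}\n}}\n'


scene = build_scene("DataHall", {
    "Rack_A01": {"discipline": "power", "sourceFile": "bom.xlsx"},
    "CDU_01": {"discipline": "cooling", "sourceFile": "layout.pdf"},
})
print(scene)
```

Keeping a pointer back to the originating file on each prim is one way to let every discipline trace a 3D object to the document it came from.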

Data silos are the most underestimated performance killer in infrastructure design. Cinta's strategic choice is clear: instead of building yet another island, open up the channels between existing islands — letting current resources flow again without forcing anyone to abandon what they already have.

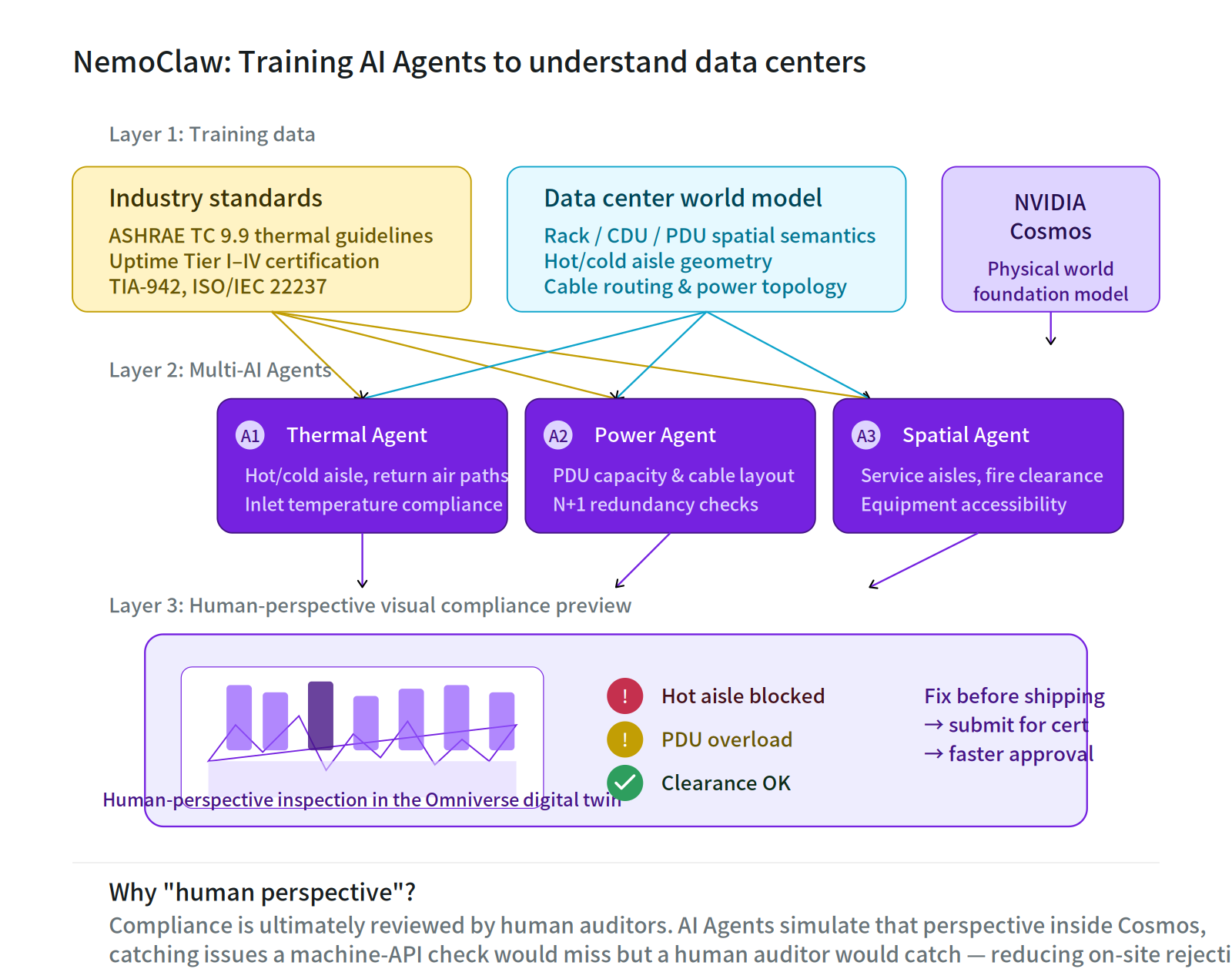

2. NemoClaw: The Multi-Agent Orchestration Layer and Security Backbone

What actually makes Cinta usable on Day 0 is not a single clever AI model — it is whether a team of specialized AI Agents can collaborate, avoid stepping on each other, and remain trustworthy throughout the workflow. Cinta adopts NVIDIA NemoClaw as the underlying Multi-Agent Orchestrator — its role is the command center and safety guardrail, enabling Cinta's specialized Agents to collaborate efficiently. It is not Cinta's source of intelligence.

Figure 4: Cinta Multi-AI Agents and the NemoClaw Orchestration Layer — Specialized Agents trained on a dual-axis structure (industry standards + world model), unified under NemoClaw for orchestration, scheduling, and security gating to perform compliance pre-checks from a human-auditor's perspective.

Specifically, Cinta leverages two capabilities of NemoClaw:

- Orchestrator command capability — letting thermal, power, spatial, and compliance Agents divide work, pass intermediate results, and reference each other within a single task flow, instead of running as isolated silos

- Enterprise-grade security and governance — when Agents read sensitive design data within the digital twin, complete permission boundaries, audit trails, and output gatekeeping are enforced
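The two capabilities above can be sketched in plain Python. This is not the NemoClaw API, which the source does not describe; the `Orchestrator` class, the agent functions, and the scene keys are all hypothetical and only illustrate the pattern of ordered dispatch, passing of intermediate results, permission boundaries, and an audit trail.

```python
from dataclasses import dataclass, field
from typing import Callable

# An agent receives a context dict and returns its finding as a dict.
Agent = Callable[[dict], dict]


@dataclass
class Orchestrator:
    agents: dict[str, Agent]
    allowed: dict[str, set[str]]  # agent name -> scene keys it may read
    audit_log: list[str] = field(default_factory=list)

    def run(self, scene: dict, order: list[str]) -> dict:
        results: dict[str, dict] = {}
        for name in order:
            # Permission boundary: each agent sees only its permitted keys.
            visible = {k: v for k, v in scene.items() if k in self.allowed[name]}
            # Findings from earlier agents are passed along as upstream results.
            finding = self.agents[name]({"scene": visible, "upstream": dict(results)})
            self.audit_log.append(f"{name} read {sorted(visible)}")
            results[name] = finding
        return results


def power_agent(ctx: dict) -> dict:
    return {"rack_kw": ctx["scene"]["rack_kw"]}


def thermal_agent(ctx: dict) -> dict:
    # References the power agent's intermediate result instead of re-reading it.
    heat = ctx["upstream"]["power"]["rack_kw"]
    return {"heat_kw": heat, "cdu_ok": heat <= ctx["scene"]["cdu_kw"]}


orch = Orchestrator(
    agents={"power": power_agent, "thermal": thermal_agent},
    allowed={"power": {"rack_kw"}, "thermal": {"cdu_kw"}},
)
results = orch.run(
    {"rack_kw": 130, "cdu_kw": 150, "owner_contract": "confidential"},
    order=["power", "thermal"],
)
print(results["thermal"])
print(orch.audit_log)
```

Neither agent is granted the `owner_contract` key, so it never enters an agent's context, and the audit log records exactly what each agent was shown.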

💡 Cinta's real value is not tied to NemoClaw's intelligence — it is anchored in our "dual-axis training assets for the data center domain" and our "Day 0 workflow." If the orchestration layer ever needs to be swapped out for another orchestrator, Cinta's Agent training assets and workflow logic are fully portable. The product's fate is not bound to the market success of any single upstream platform.

In short, Cinta is betting on NemoClaw because it is currently the most mature orchestration and security backbone available: a perfect fit for what we need today, but never indispensable.

Cinta's Proprietary Asset: Dual-Axis Agent Training

The Agents' actual "brain" is trained and owned by Cinta itself, with a dual-axis training structure:

Axis 1: Industry Standards Documents

Cinta's AI Agents are deeply trained on the authoritative regulations of the data center domain — ASHRAE TC 9.9 thermal guidelines, Uptime Institute Tier I-IV certification standards, TIA-942, ISO/IEC 22237, and more. This allows the AI to not just "know the rules" but to genuinely understand the engineering intent behind every clause.

Axis 2: Data Center World Model

Through NVIDIA Cosmos's physical world foundation model, Cinta's Agents further learn to "see the machine room" — to understand the spatial semantics of racks, CDUs, and PDUs; the geometric configuration of hot/cold aisles; and how cabling and power distribution topology should be laid out reasonably in 3D space. This is the leap that takes AI from "reading documents" to "seeing space."

The result of this cross-axis training is a fleet of specialized Agents capable of performing visual compliance pre-checks within an Omniverse digital twin from a human auditor's perspective: a thermal regulation Agent inspecting hot/cold aisles and return airflow paths, a power topology Agent verifying PDU capacity and N+1 redundancy, and a spatial layout Agent confirming maintenance walkways and fire-safety clearances. Task dispatch, result integration, and security control between these Agents are handled uniformly by the NemoClaw orchestration layer.
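One of the checks named above, N+1 redundancy, reduces to a simple rule: the load must still be carried when any single unit is out of service. The sketch below shows that rule only; the function name, unit capacities, and rack figures are hypothetical, and a real power topology check would consider far more than total capacity.

```python
def survives_single_failure(load_kw: float, unit_capacity_kw: float, units: int) -> bool:
    """N+1 check: the remaining units must cover the load after losing one."""
    return units >= 2 and (units - 1) * unit_capacity_kw >= load_kw


# Hypothetical row: 8 racks at 130 kW each, fed by 300 kW distribution units.
row_load_kw = 8 * 130

print(survives_single_failure(row_load_kw, 300, units=4))  # False: 900 kW left
print(survives_single_failure(row_load_kw, 300, units=5))  # True: 1200 kW left
```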

3. Why must it be a "human perspective"?

This is the most critical design choice Cinta made for its Agents.

💡 Compliance certification is ultimately reviewed by human auditors — they walk into the machine room, crouch down to inspect cable management, look up to check return airflow space, walk through maintenance aisles to feel accessibility. If the AI only checks via machine-friendly API formats, it will miss many issues that "humans catch but machine formats can't see."

Cinta's Agents simulate the human auditor's perspective within NVIDIA Cosmos's world model, examining each compliance dimension within the digital twin one by one — and the NemoClaw orchestration layer cross-references findings across multiple dimensions. This delivers three direct business values:

- Reduces compliance issues before equipment leaves the factory: hardware vendors can simulate deployment in the digital world ahead of time and catch potential issues, avoiding spec mismatches discovered only on the customer's site

- Speeds up approval after certification submission: when designs submitted to certification bodies have already been pre-screened by AI from a human auditor's perspective, on-site audits can be shortened dramatically

- Reduces rework and schedule risk: issues caught on Day 0 cost roughly 1% of what they cost when rejected on the construction site

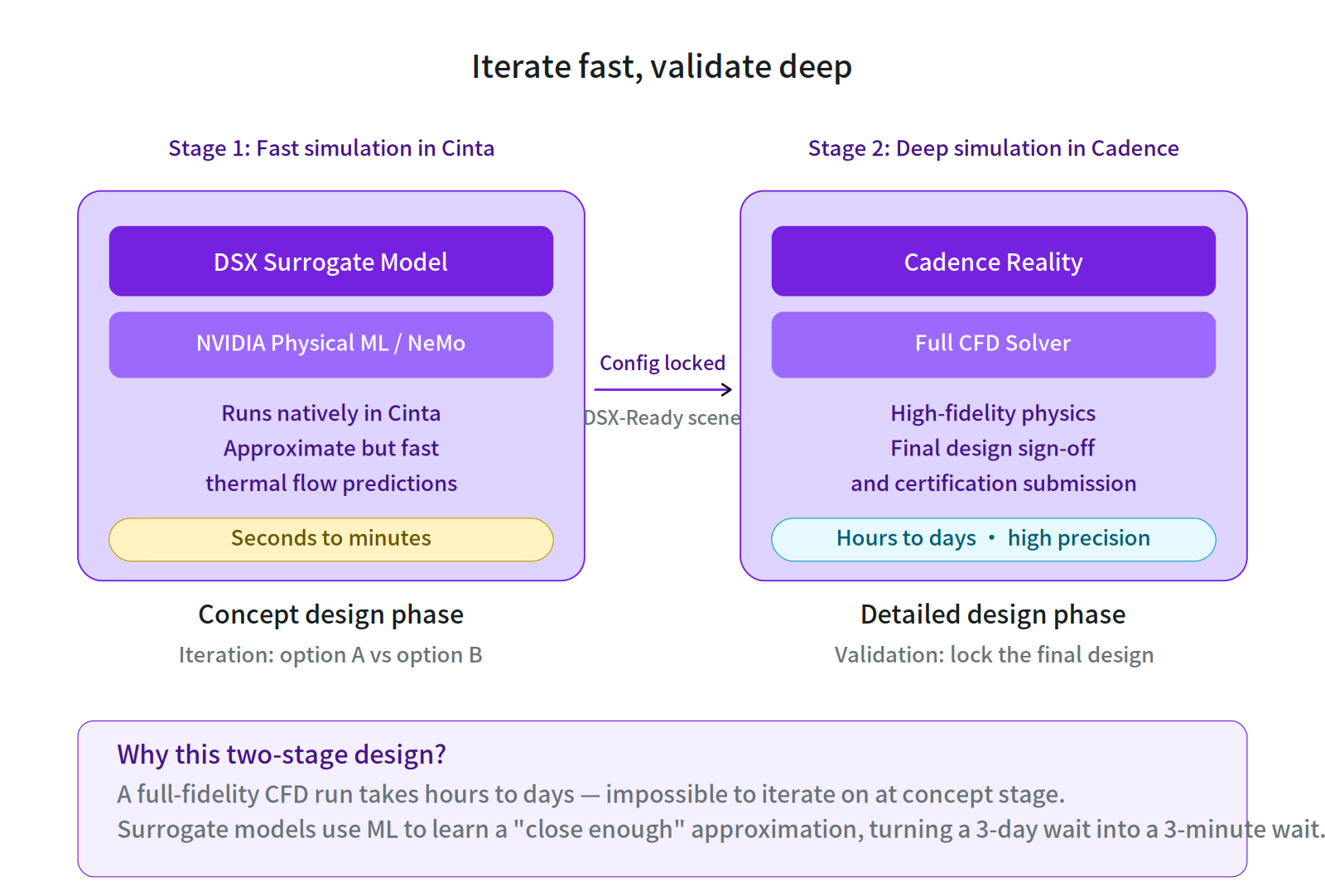

4. Iterate fast, validate deep: surrogate models accelerate concept-stage decisions

Compliance preview alone isn't enough. The other Day 0 pain point is decision velocity — when an owner is weighing "8-row racks vs 10-row racks" or "liquid-cooling zoning option A vs option B," the traditional flow makes them wait until downstream CFD finishes. A full-fidelity CFD run takes hours to days. Iterating at concept stage becomes nearly impossible.

Cinta's solution is to integrate the DSX Blueprint's Surrogate Model directly into the platform. Through NVIDIA Physical ML / NeMo, Cinta runs a fast ML approximation that produces preliminary thermal predictions before a Cadence Reality deep simulation even starts.

Figure 5: Surrogate Model two-stage flow: Rapid concept-stage iteration in Cinta on the left, deep validation by Cadence Reality on the right.

This creates Cinta's two-stage simulation flow:

- Stage 1 (concept design): fast iteration inside Cinta — using the DSX Surrogate Model + Physical ML, Cinta returns approximate thermal-flow predictions in seconds to minutes. Owners can compare option A vs option B rapidly and rule out unsuitable configurations at the concept stage

- Stage 2 (detailed design): deep validation by Cadence Reality — once the concept configuration is locked, the DSX-Ready scene flows into a full CFD solver for high-fidelity physics, used for final design sign-off and certification submission
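The two stages above follow a general surrogate-screening pattern, which can be sketched with a toy model. This is not the DSX Surrogate Model or a real CFD solver: `full_simulation` is a made-up placeholder formula, the surrogate is a plain least-squares line, and the 27 °C inlet limit and candidate rack powers are assumptions chosen for illustration.

```python
def full_simulation(rack_kw: float) -> float:
    """Stand-in for an expensive CFD run; returns peak inlet temperature in C.
    The formula is a toy placeholder, not real physics."""
    return 16.0 + 0.05 * rack_kw + 0.0001 * rack_kw ** 2


def fit_linear(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# A handful of expensive runs provide training data for the surrogate.
train_x = [80.0, 120.0, 160.0, 200.0]
slope, intercept = fit_linear(train_x, [full_simulation(x) for x in train_x])


def surrogate(rack_kw: float) -> float:
    """Cheap approximation used for concept-stage screening."""
    return slope * rack_kw + intercept


# Stage 1: screen many candidate configurations with the cheap model.
LIMIT_C = 27.0  # assumed inlet temperature limit
candidates = [90.0, 110.0, 130.0, 150.0, 170.0, 190.0]
shortlist = [kw for kw in candidates if surrogate(kw) <= LIMIT_C]

# Stage 2: confirm only the densest shortlisted option with the full solver.
chosen = max(shortlist)
confirmed = full_simulation(chosen) <= LIMIT_C
print(shortlist, chosen, confirmed)
```

The expensive function is called once in stage 2 instead of once per candidate, which is where the time saving in the concept phase comes from.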

The surrogate model isn't trying to replace Cadence Reality's high-precision simulation. It's about turning a concept-stage decision from "wait three days" into "wait three minutes." This compresses the AI factory's design timeline by another significant chunk — and it elevates Cinta from a "data preparation platform" to a fast iteration engine for early-stage decisions.

For the NVIDIA ecosystem, this is also evidence of Cinta's deep integration with the DSX Blueprint. Cinta isn't just reading downstream simulator formats — it directly leverages the DSX Blueprint's built-in Surrogate Model assets and applies them at the most critical stage: concept design.

5. Opening Interfaces for Downstream Simulation and Operations

Cinta does not replace any of the specialized simulation tools in the DSX ecosystem — it serves as their perfect upstream data source and integration interface.

Once Cinta has completed the Day 0 configuration, the NemoClaw orchestration layer has steered the Multi-AI Agents through the pre-check process, and the Surrogate Model has validated the concept, the platform exports not static drawings but a "DSX-Ready" digital twin scene rich with precise physical properties and a Bill of Materials (BOM). This high-purity digital asset can be fed directly into downstream functional domains:

- CFD thermal simulation: through the integration with Cadence, OpenUSD scenes can be directly imported for deep thermal analysis (this is the integration already in production today)

- Power topology validation: future integration with specialized electrical-domain validation tools for power topology and short-circuit testing

- Detailed engineering design: future detailed-engineering partners can take over fine-grained planning based on Cinta's baseline model

- Operational AI agents: future cooling-optimization and grid-dispatch agentic AI systems can run continuously on this scene

Figure 6: Cinta for AI Factory's Position in the Ecosystem — Transforming chaotic upstream inputs into standardized assets ready for direct downstream consumption. CFD thermal simulation is already implemented through Cadence integration; the other three domains represent potential integration partners.

This is a critical commercial position: Cinta does not compete with downstream simulation tools — it makes them more usable. From an ecosystem perspective, this is the role of reducing friction and amplifying every partner's value. As Cinta completes integrations with more DSX ecosystem partners, the network effect of this hub position will continue to expand.

6. Setting the baseline for Day 180+ operations

The most critical and most overlooked layer: the digital twin Cinta produces is not just for construction. Once the AI factory goes live, the Baseline Scene Cinta has established becomes the foundational map of the operational digital twin.

Whether it's the self-learning AI agent responsible for cooling optimization or the agentic system executing DSX Flex grid load dispatch, both require a precise, compliance-validated digital mapping of the physical environment to make decisions. Cinta lays the digital infrastructure rails for these high-level operational AIs all the way back on Day 0.

In other words, the quality of Day 0 decisions continues to compound on Day 180, Day 720, and across the entire operational lifecycle of the factory — and this is precisely the long-term role Cinta for AI Factory hopes to take on.

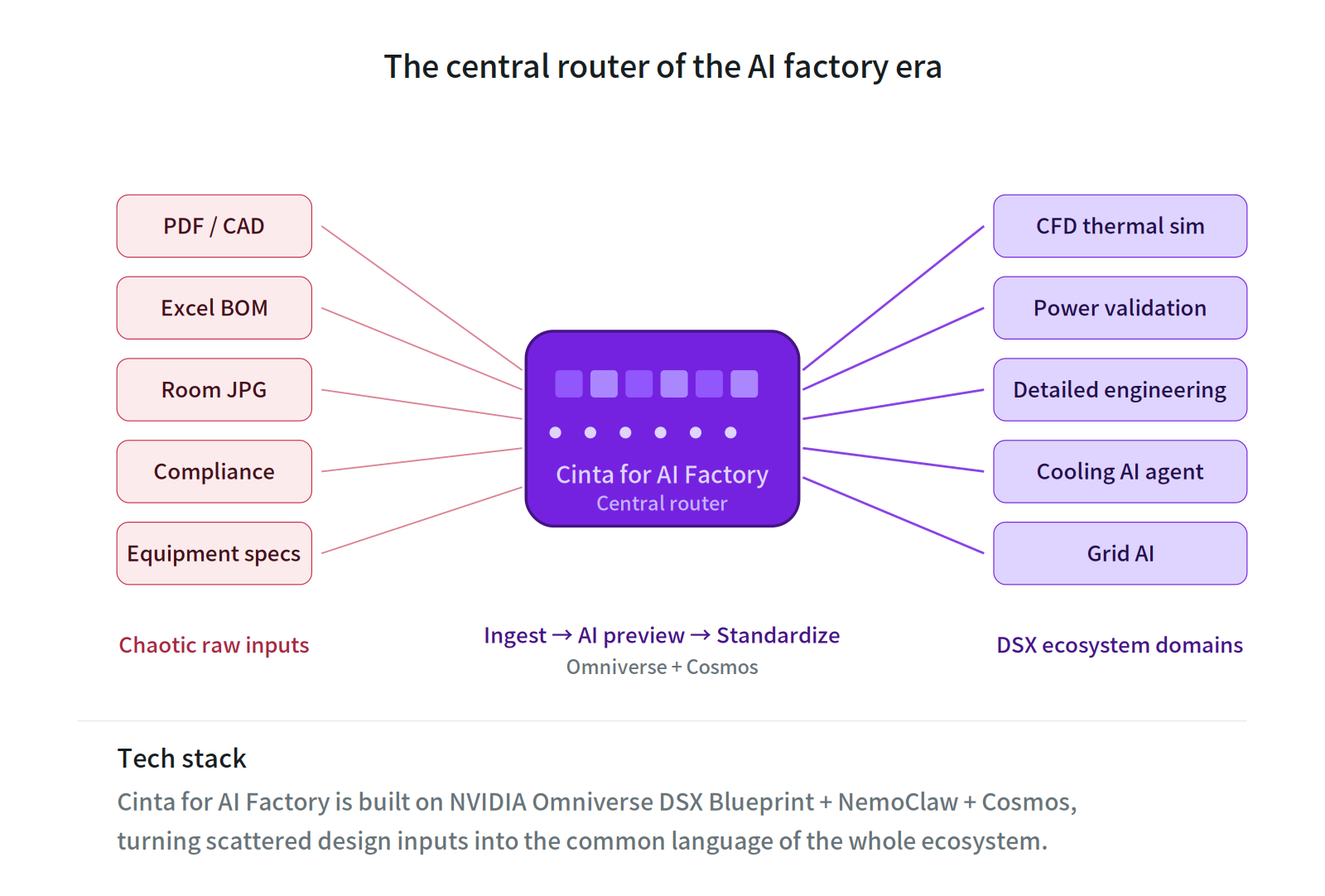

Conclusion: The Central Router of the AI Factory Era

If the NVIDIA DSX Blueprint is a vast and precisely operating digital city, then Cinta for AI Factory is the central router of that city.

Figure 7: The Central Router — Distributing chaotic raw inputs across the entire DSX ecosystem. Cinta for AI Factory is built on NVIDIA Omniverse DSX Blueprint + NemoClaw + Cosmos.

It isn't just about a flashy 2D-to-3D technical capability. Its real platform value lies in this: the ability to ingest the most chaotic, raw design inputs, run human-perspective visual compliance pre-checks through Cinta's Multi-AI Agents under the orchestration of NemoClaw, rapidly iterate concepts via the DSX Surrogate Model, and ultimately convert all of it into OpenUSD-compliant standardized digital assets that any downstream tool can read and deeply simulate.

The AI factory competition of the next decade will not be decided by simulation precision alone, nor by hardware specs alone. It will be decided by the platform that can stitch the entire ecosystem together and let every workflow segment connect seamlessly.

DSX provided the blueprint and defined the downstream standards. But the chaos before the blueprint, the real starting point that begins with PDFs and Excel sheets, needs a different layer of tools. Through deep integration with NVIDIA Omniverse DSX Blueprint, NemoClaw, Cosmos, and Physical ML, Cinta for AI Factory enables AI Agents, for the first time, to read a data center the way a senior auditor does. It also helps designers iterate decisions in seconds to minutes during the concept phase, pulling the timelines for industry compliance certification and design freeze decisively forward.

This is the position Cinta has chosen: not to compete with downstream simulation tools, but to fill in the upstream segment that still awaits integration — a segment that profoundly shapes the speed and quality of the entire production line.

Sources: NVIDIA official announcements (2026/3/16), NVIDIA Omniverse DSX Blueprint, NVIDIA NemoClaw, NVIDIA Cosmos, NVIDIA Physical ML / NeMo platform documentation, Agaruda Cinta for AI Factory platform documentation, ASHRAE TC 9.9, Uptime Institute, TIA-942, ISO/IEC 22237 and other data center industry standards.