05.06.2026

When Data Centers Become "Money Printers": The New Industrial Logic of Gigawatt-Scale AI Factories

Infrastructure ・ Deep Analysis | May 2026

When you're staring at a decision that runs $35 billion and takes two to three years to come online, a lot of what we take for granted in software starts to look less obvious. "Move fast and break things" stops being a question of efficiency at this kind of capital-intensive scale — broken things now translate directly into mistakes worth tens of billions. NVIDIA's Vera Rubin DSX and Omniverse DSX aren't fundamentally about making things faster. They're about redefining what counts, in the AI era, as a decision process you can responsibly bet on.

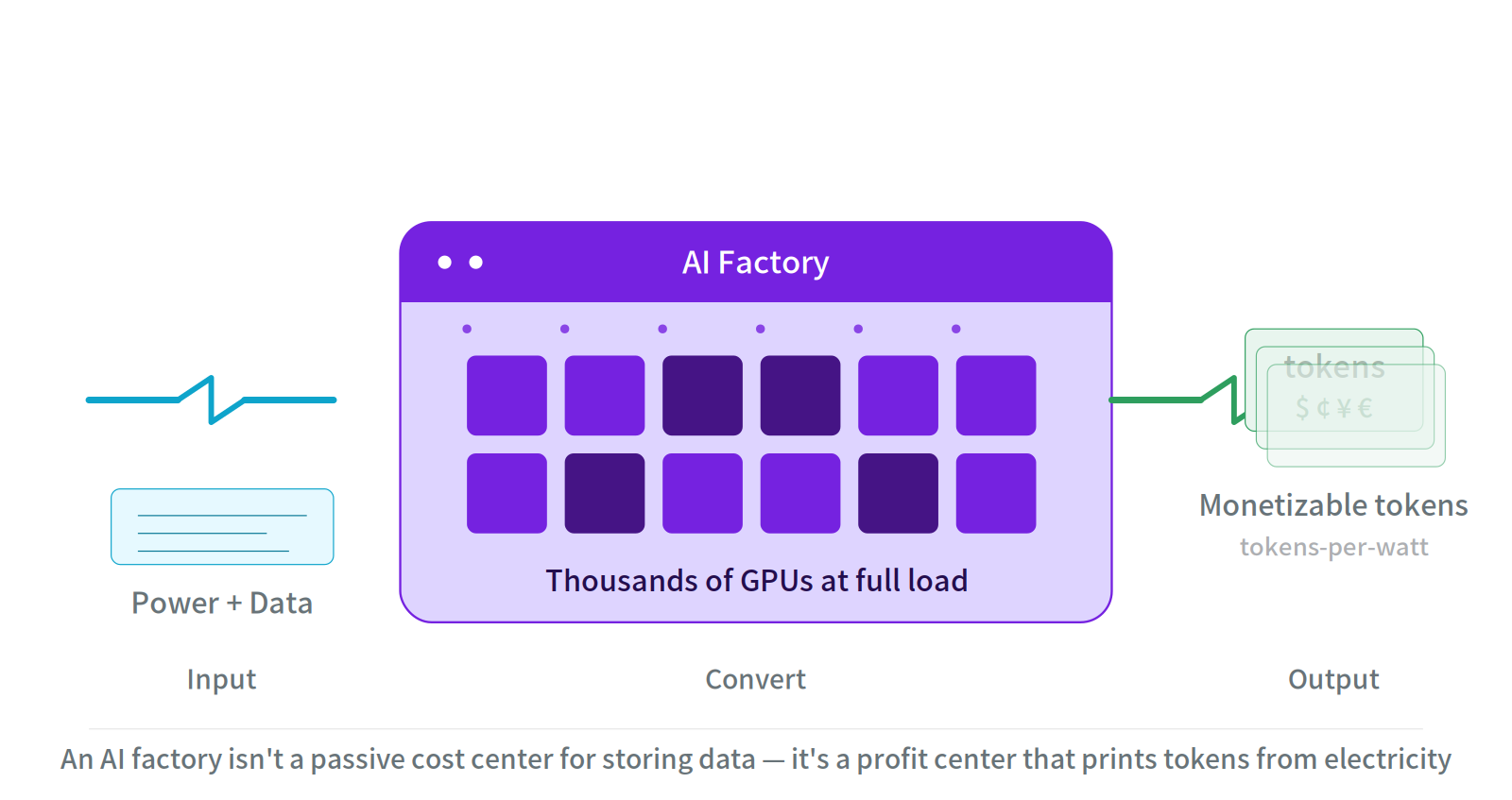

Fig 1: AI factory as production line - Picture an AI factory as an assembly line: power and data go in on the left, glowing GPU cores in the middle, monetizable tokens out on the right.

Prologue: A $50 Billion Engineering Bet

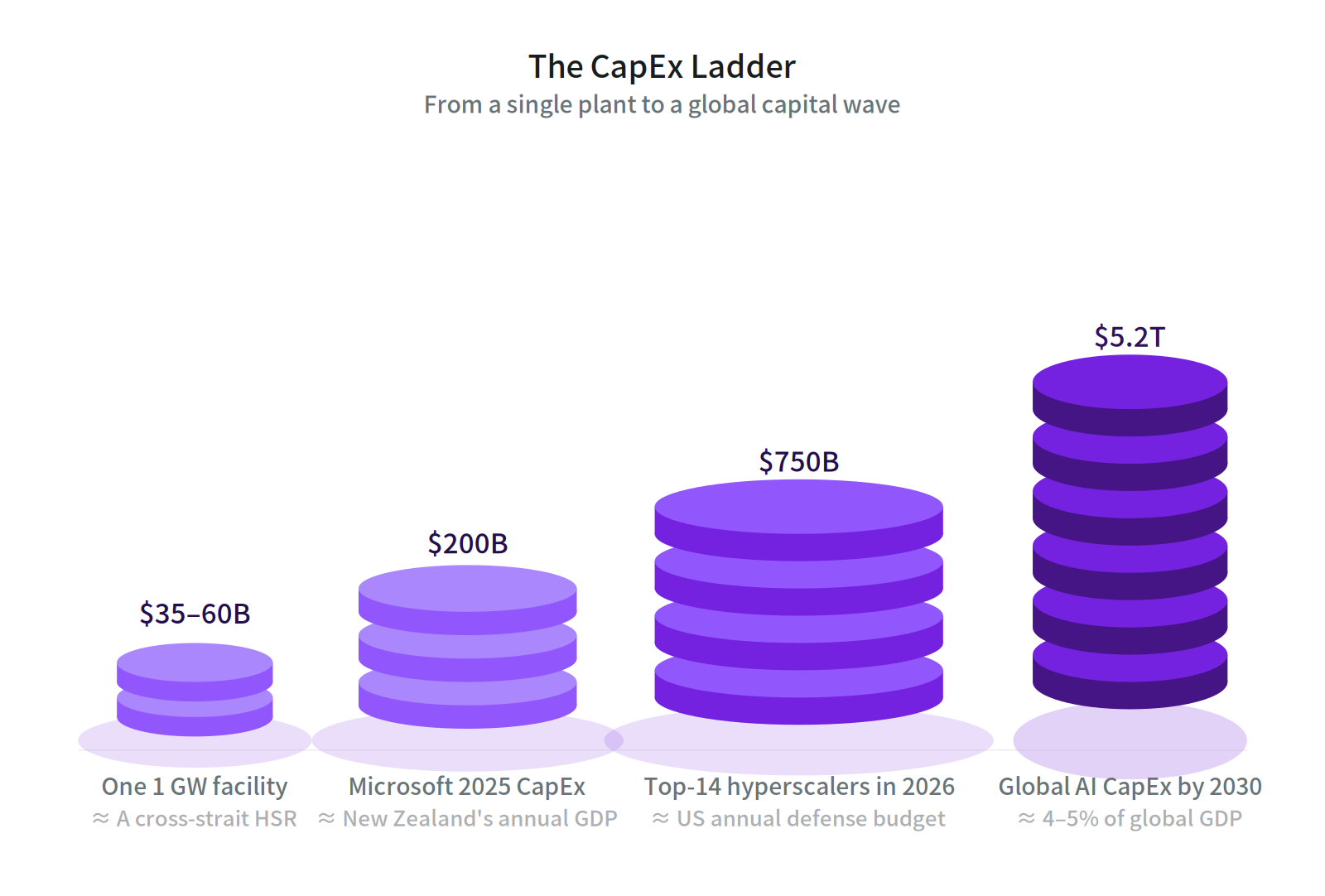

Building a 1-gigawatt AI factory now runs $35–60 billion in CapEx, with a two-to-three-year timeline. But nobody knows what hardware spec customers will demand by the time it opens, where the market will price compute, or whether the grid interconnection will land on schedule. If you build the wrong thing, there's no Plan B.

This is the actual condition of AI infrastructure construction in 2026. McKinsey projects $5.2 trillion in global AI data center investment by 2030. BloombergNEF data shows the top-14 hyperscalers will spend close to $750 billion in 2026 alone — nearly double last year.

| Scale | Amount | Reference |
|---|---|---|
| Per 1 GW facility CapEx | $35–60B | ≈ A cross-strait High Speed Rail |
| Microsoft 2025 CapEx | $200B | ≈ New Zealand's annual GDP |
| Top-14 hyperscalers in 2026 | $750B | ≈ US annual defense budget |
| Global AI CapEx by 2030 | $5.2T | ≈ 4–5% of global GDP |

Put differently: when the stakes scale from "redeploy after a software bug" to "you got a multi-billion-dollar factory wrong," the decision process itself needs to be redesigned. That's why NVIDIA's Vera Rubin DSX reference design and Omniverse DSX blueprint, unveiled in March 2026, aren't really a product launch. They're insurance against sending tens of billions of dollars down the drain.

Fig 2: The CapEx Ladder - From a single facility to a global capital wave: AI infrastructure CapEx has crossed into trillion-dollar territory.

Three lenses on the shift: economics, engineering philosophy, and energy politics.

I. From Saving Watts to Printing Tokens

For two decades, the data center KPI was PUE (Power Usage Effectiveness) — a pure cost-control metric. But an AI factory isn't a passive cost center for storing data. It's a production line: power and data in, monetizable tokens out.

A new KPI follows naturally: tokens-per-watt. Or more bluntly, how much money each watt prints per second.

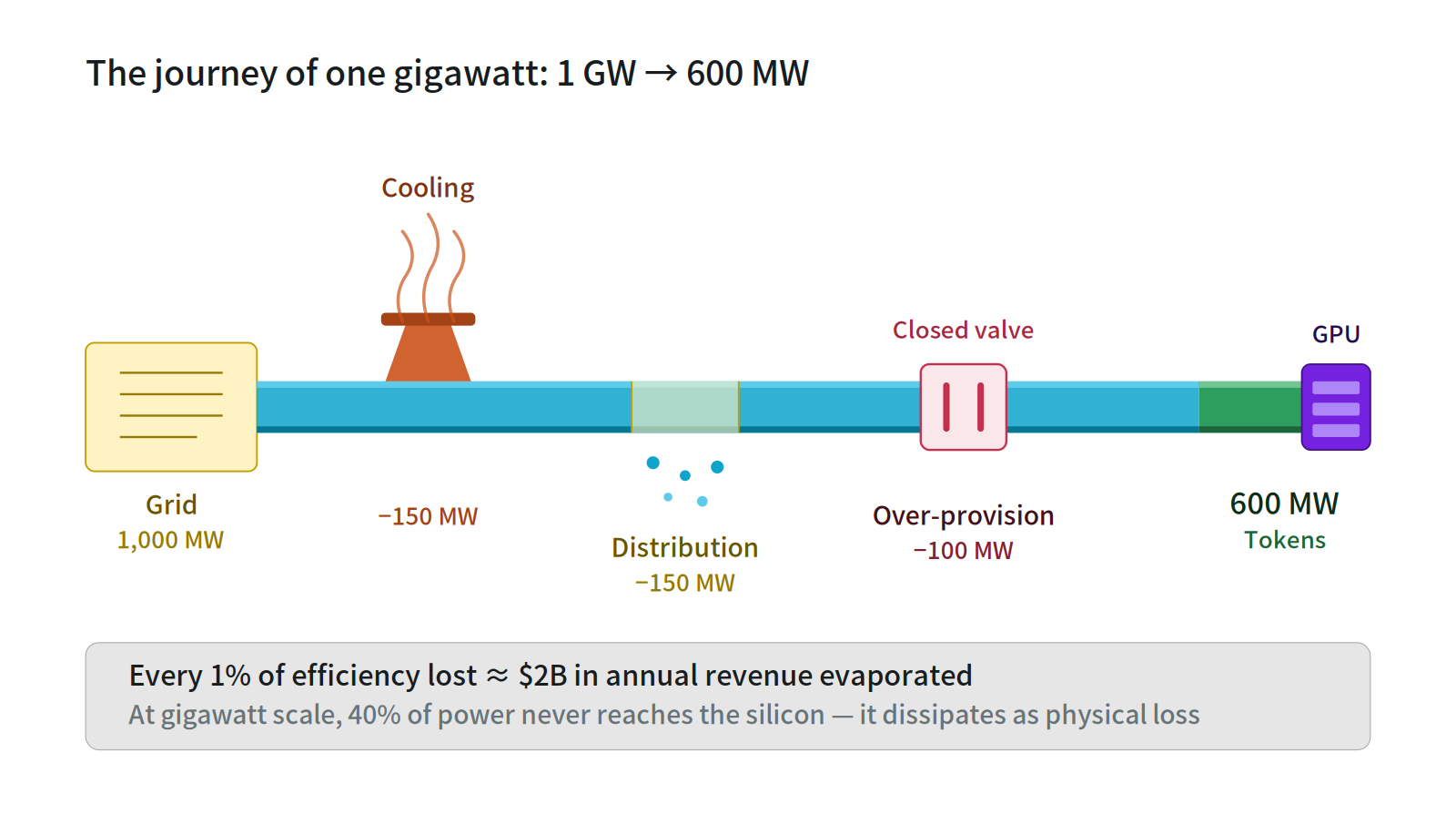

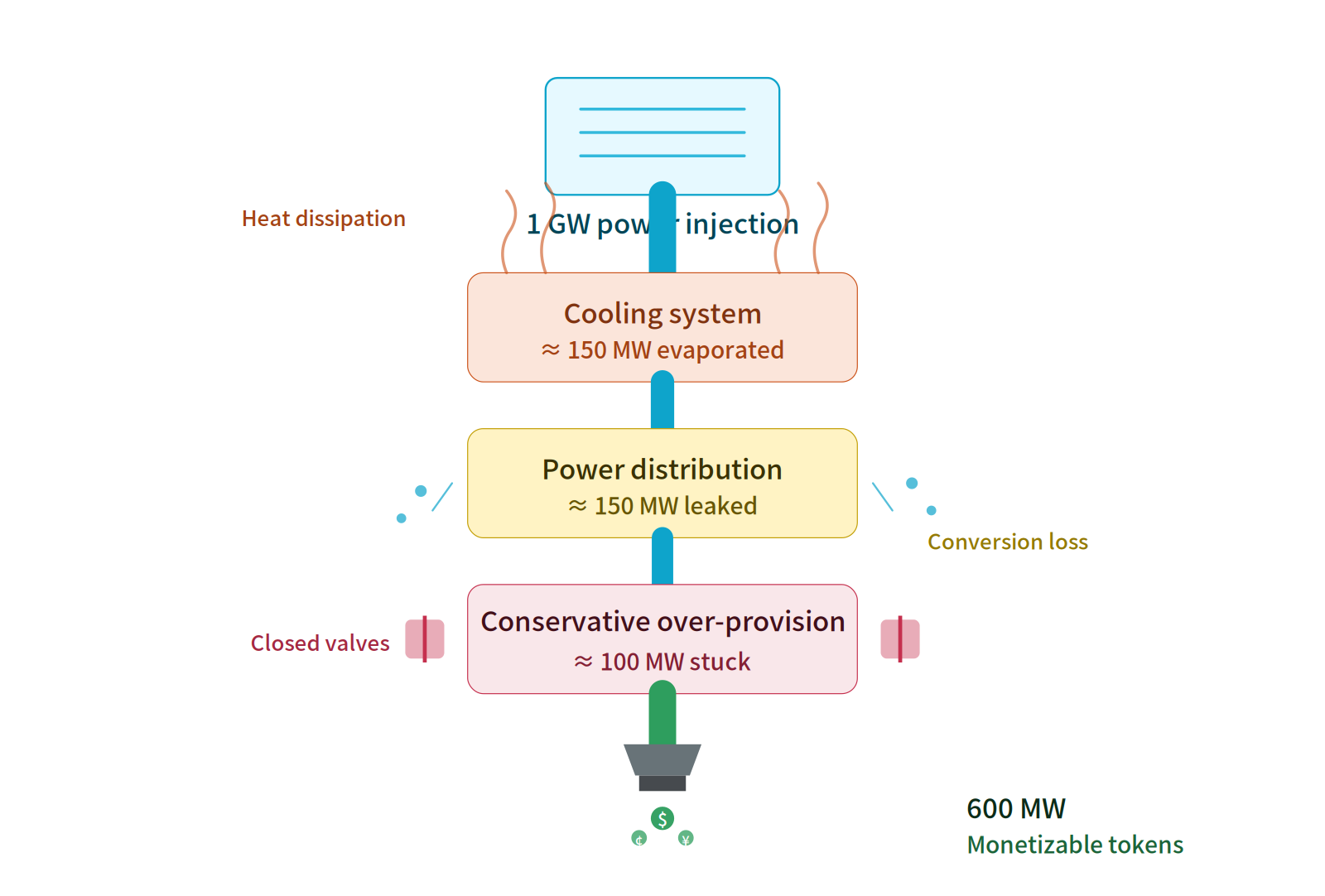

💡 At gigawatt scale, up to 40% of incoming power evaporates before it ever reaches the chips — burned on inefficient cooling, distribution losses, and conservative over-provisioning.

This shift exposes an underappreciated number. In a 1 GW facility, up to 400 MW never becomes tokens, never becomes revenue. If the facility's potential annual revenue is $200 billion, and revenue scales with the power that actually reaches the chips, every 1% of efficiency lost equals $2 billion in evaporated revenue.
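The arithmetic above can be sketched in a few lines. This is a rough illustration, not a revenue model: it assumes revenue scales linearly with the power that reaches the chips, and the function name `lost_revenue` is made up for this example. The inputs (1 GW, up to 40% loss, $200 billion potential revenue) are the figures quoted in the text.

```python
def lost_revenue(facility_mw: float, loss_fraction: float,
                 potential_revenue_usd: float) -> dict:
    """Translate a power-loss fraction into stranded megawatts and revenue.

    Assumption (not from the source): revenue is proportional to the
    power delivered to the chips.
    """
    if not 0.0 <= loss_fraction <= 1.0:
        raise ValueError("loss_fraction must be between 0 and 1")
    return {
        "stranded_mw": facility_mw * loss_fraction,
        "lost_revenue_usd": potential_revenue_usd * loss_fraction,
    }


# Figures quoted in the article: 1 GW site, up to 40% loss, $200B potential.
worst_case = lost_revenue(1000, 0.40, 200e9)
per_point = lost_revenue(1000, 0.01, 200e9)

print(f"Worst case: {worst_case['stranded_mw']:.0f} MW stranded")
print(f"Each 1% lost: ${per_point['lost_revenue_usd'] / 1e9:.1f}B per year")
```

Under that linear assumption, the 40% worst case corresponds to 400 MW stranded, and each percentage point corresponds to the $2 billion quoted above.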

Fig 3: Power journey cross-section - Between the grid and the GPU sit three loss points that can consume up to 40% of all incoming energy.

The deeper shift: cost structure inversion

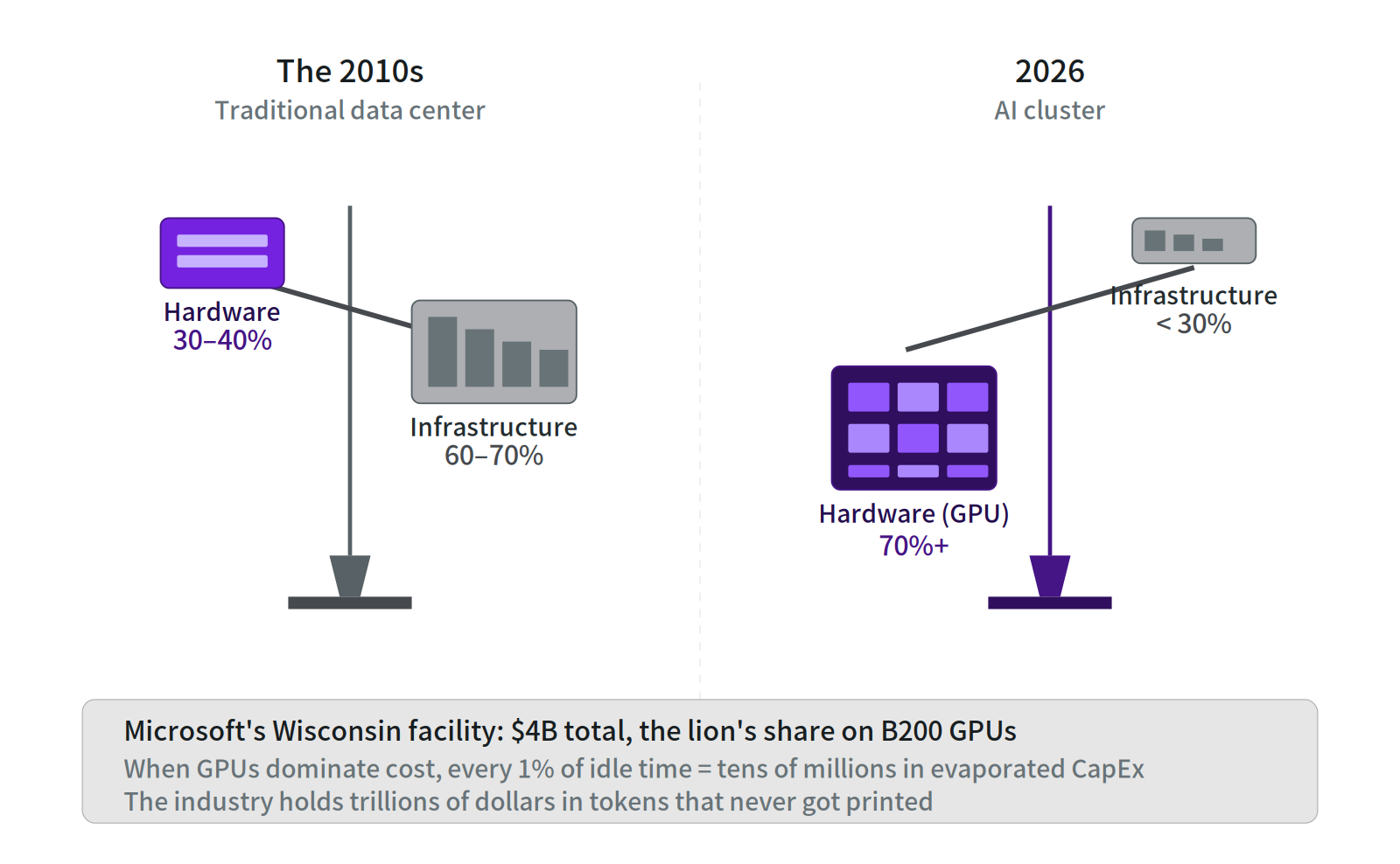

In legacy data centers, hardware was 30–40% of total cost; infrastructure (land, power, cooling) was 60–70%. In 2026 AI clusters, hardware has flipped to 70%+. Microsoft's $4 billion Wisconsin facility puts the lion's share into B200 GPUs — a single high-density B200 rack costs $4 million.

Fig 4: Cost structure seesaw - The seesaw inverts: when GPUs dominate cost, every percentage point of idle time is CapEx evaporated.

The arithmetic is brutal. When GPUs dominate cost, every percentage point of idle time burns tens of millions in CapEx. Yet most colocation data centers run GPU utilization at just 30–50%; even top hyperscalers struggle to hold 60–70% steady. The industry is sitting on trillions of dollars’ worth of tokens that never got printed.
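To see where "tens of millions per percentage point" can come from, here is a back-of-the-envelope sketch. The CapEx range and the 70% hardware share come from the text. The five-year straight-line amortization period is an assumption added for illustration, and `idle_capex_per_year` is a hypothetical helper name.

```python
def idle_capex_per_year(capex_usd: float, hardware_share: float,
                        amortization_years: float, utilization: float) -> float:
    """Annual hardware CapEx that is paid for but produces nothing.

    Assumption: hardware is amortized straight-line over
    `amortization_years`, and idle time wastes a proportional share.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    hardware_per_year = capex_usd * hardware_share / amortization_years
    return hardware_per_year * (1.0 - utilization)


# Cost of a single percentage point of idle time (99% utilization),
# at the low and high ends of the $35-60B range quoted in the article.
low = idle_capex_per_year(35e9, 0.70, 5, 0.99)
high = idle_capex_per_year(60e9, 0.70, 5, 0.99)

# The same facility at 50% utilization, the upper end for colocation sites.
half_idle = idle_capex_per_year(35e9, 0.70, 5, 0.50)

print(f"1% idle: ${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")
print(f"50% idle: ${half_idle / 1e9:.2f}B per year")
```

With these inputs, one point of idle time works out to roughly $49 million to $84 million a year. A shorter amortization period would push the figure higher.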

Fig 5: Power as fluid pipeline - Imagine power as fluid: from injection to outflow, the evaporation, leakage, and stuck valves along the way are all dollars.

II. "Simulate First, Then Deploy Perfectly"

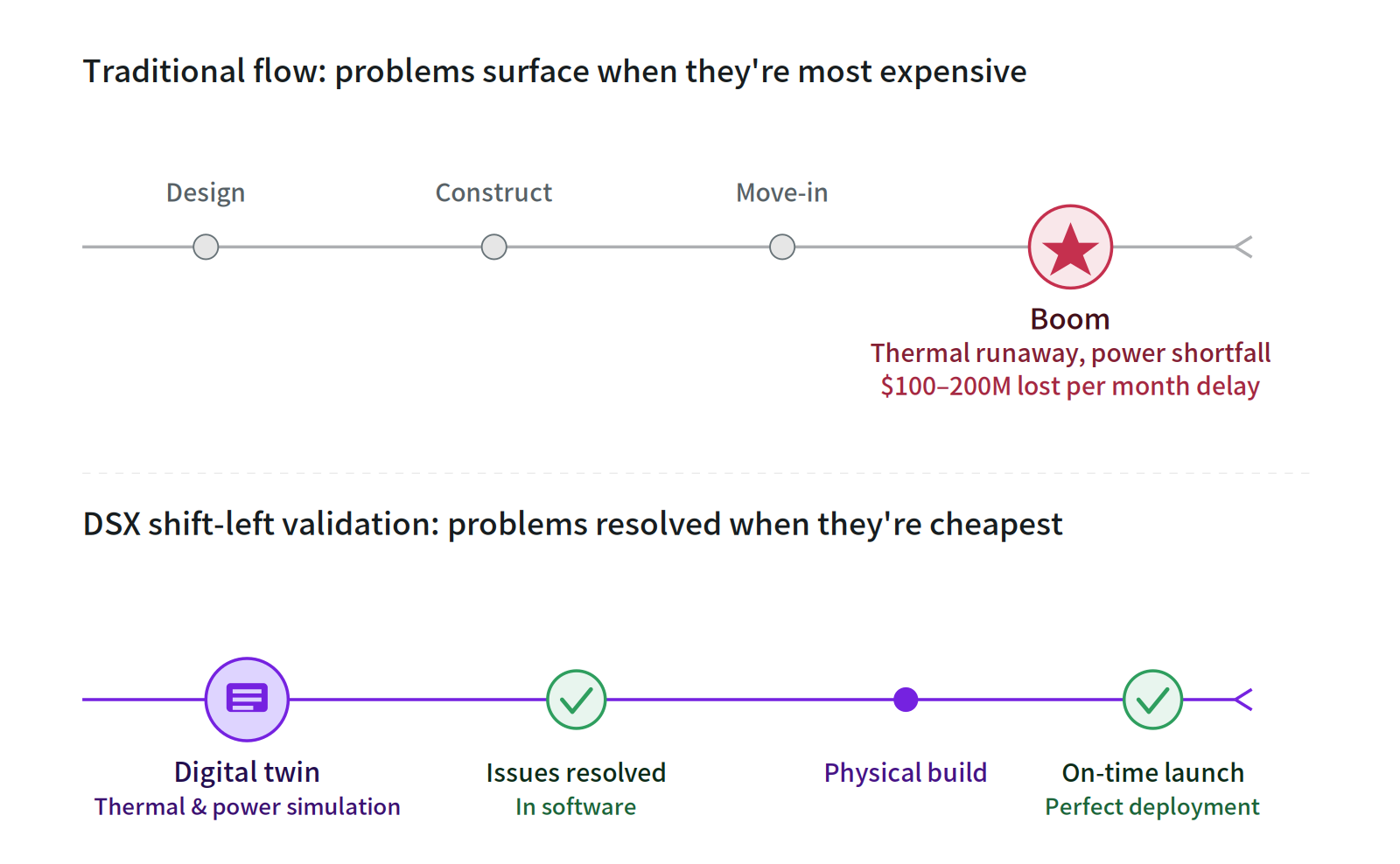

The new generation of Vera Rubin and GB300 systems pushes rack power density to 130–150 kW, with some custom designs exceeding 200 kW. At this density, air cooling stops working entirely. The coupling complexity of power distribution explodes. A single hotspot can throttle the entire GPU cluster.

The old sequential engineering flow, in which problems surface only at the construction site, falls apart here, and every month of delayed launch costs $100–200 million in foregone revenue.

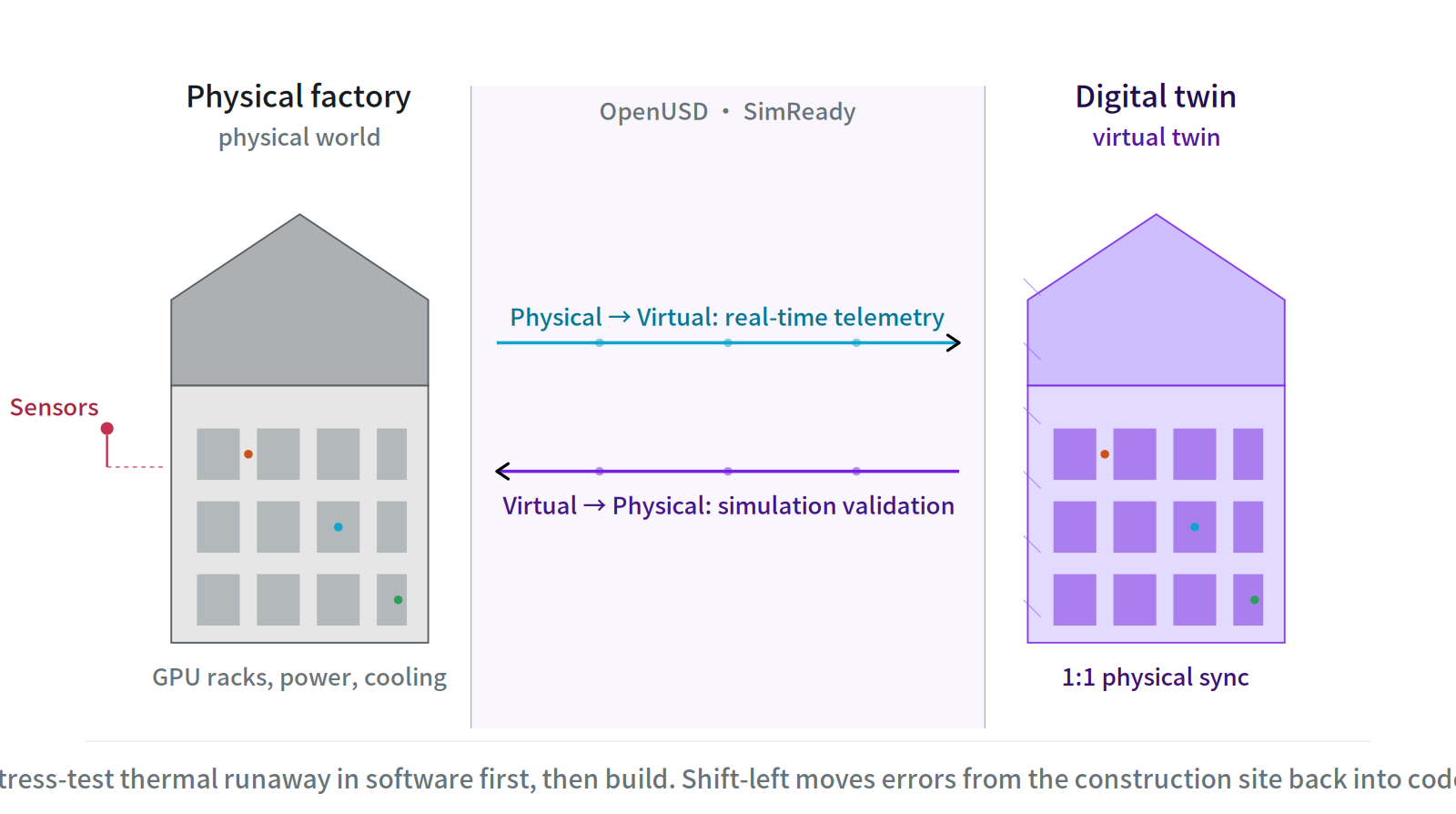

Fig 6: The digital twin's bidirectional sync - every sensor in the physical factory mirrors into the virtual copy, and simulated changes push back to the real world.

Omniverse DSX introduces what the industry calls "shift-left validation" — moving error detection from the expensive construction site back into cheap software simulation. It rests on three pillars:

- OpenUSD as the open standard: Cooling vendors, power distributors, and construction firms collaborate on the same shared 3D scene, ending the era of incompatible CAD files.

- SimReady assets: Every device, from a Vertiv cooling tower to an Eaton power distribution unit, comes with real physical properties (thermal resistance, fluid dynamics, electrical characteristics). Before breaking ground, you can simulate thousands of GPUs running at full thermal load.

- Full lifecycle digital twin: Once live, physical sensors stream telemetry into the virtual copy. Hardware upgrades or workload reshuffles can be rehearsed digitally before touching production.

This is essentially the first time software engineering’s dev/staging practices have been fully brought into heavy industry.
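The shift-left idea can be illustrated with a toy pre-construction check. This is not the Omniverse DSX API or a physics simulation; it is a plain-Python sketch in which the `Rack` class, `find_overloaded_loops`, the cooling-loop capacities, and the 10% safety margin are all made up for illustration. Only the rack densities (about 140 kW and 200 kW) echo the figures in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rack:
    name: str
    power_kw: float  # draw at full load; nearly all of it ends up as heat
    loop: str        # cooling loop this rack is plumbed into


def find_overloaded_loops(racks, loop_capacity_kw, safety_margin=0.10):
    """Return {loop: shortfall_kw} for loops whose heat load exceeds
    usable capacity, i.e. nameplate capacity minus the safety margin."""
    load = {}
    for rack in racks:
        load[rack.loop] = load.get(rack.loop, 0.0) + rack.power_kw

    problems = {}
    for loop, total_kw in load.items():
        if loop not in loop_capacity_kw:
            raise KeyError(f"no capacity defined for loop {loop!r}")
        usable_kw = loop_capacity_kw[loop] * (1.0 - safety_margin)
        if total_kw > usable_kw:
            problems[loop] = total_kw - usable_kw
    return problems


# Hypothetical design: two ~140 kW racks on loop A, one 200 kW rack on loop B.
design = [Rack("r1", 140, "A"), Rack("r2", 140, "A"), Rack("r3", 200, "B")]
capacity = {"A": 400, "B": 210}  # illustrative nameplate capacities in kW

issues = find_overloaded_loops(design, capacity)
for loop, shortfall in issues.items():
    print(f"Loop {loop} is short by {shortfall:.0f} kW; fix it in the model")
```

Here loop B fails the check before any concrete is poured. A real digital twin replaces the single inequality with coupled thermal, fluid, and electrical models, but the workflow is the same: run the design, read the failures, change the design.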

Fig 7: Shift-left timeline - Traditional flow blows up at the end; DSX flow defuses problems at the start. When you find the error decides what fixing it costs.

And this isn't slideware. The blueprint is already deployed at Switch's 2 GW Georgia facility, the Stargate 1.2 GW project in Abilene, Texas, and the Nscale–Caterpillar multi-gigawatt site in West Virginia — billed as one of the world's largest AI factories.

III. From Grid Vampire to Grid Partner

If you think the bottleneck on AI infrastructure is GPU supply, you're wrong. The real bottleneck is power.

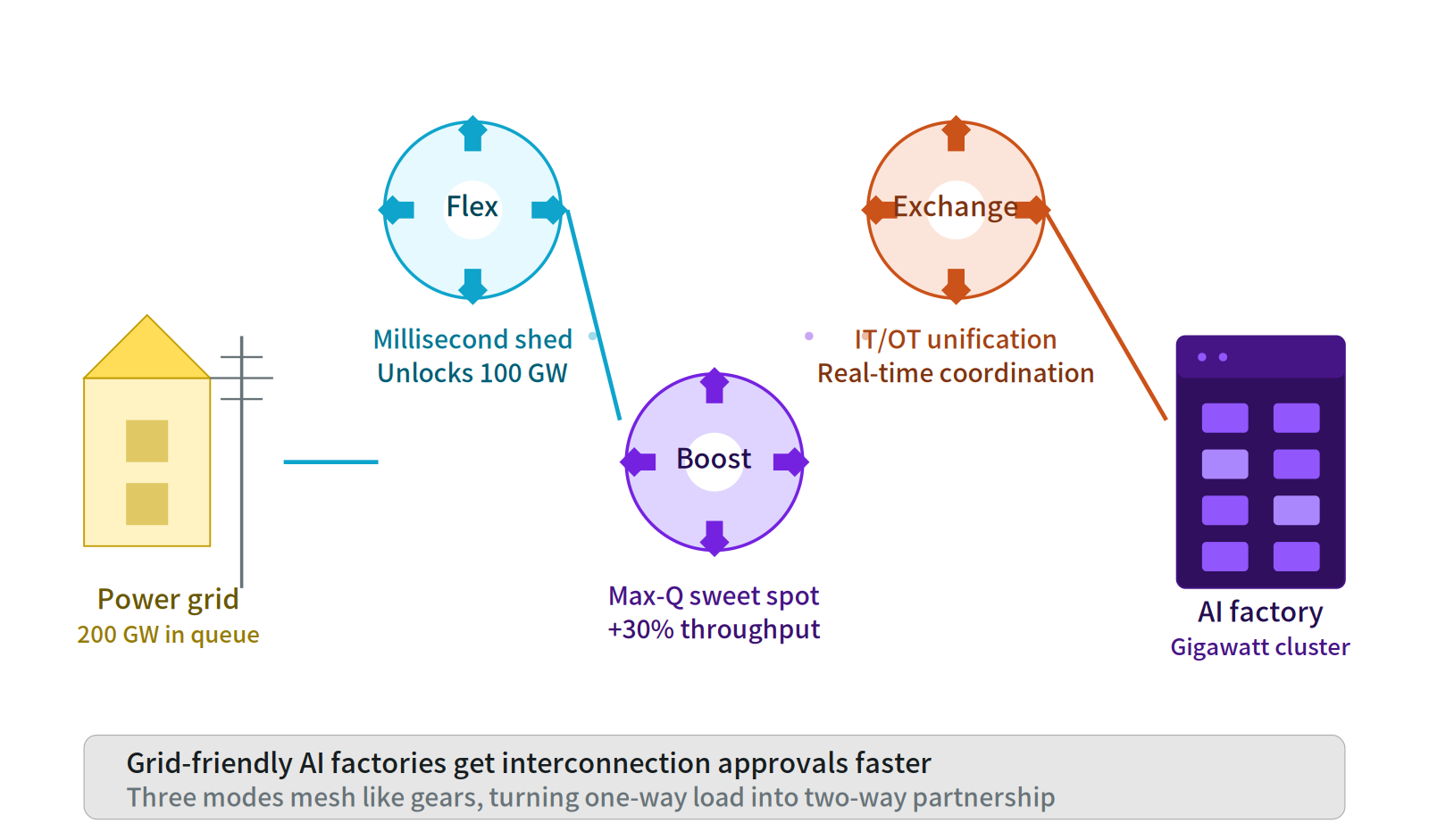

NVIDIA disclosed that the US has more than $300 billion in equipment backlogged and over 200 GW of projects stuck in interconnection queues waiting for power. US AI data center demand alone is projected to surge from 4 GW in 2024 to 123 GW by 2035, over 30× growth.
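For a sense of pace, the 4 GW to 123 GW projection can be converted into an implied annual growth rate. The sketch below assumes smooth compound growth across the 11 years from 2024 to 2035, which is a simplification added here. The function name `implied_cagr` is illustrative.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1.0 / years) - 1.0


# Figures quoted in the article: 4 GW in 2024, 123 GW projected for 2035.
growth_multiple = 123 / 4
cagr = implied_cagr(4, 123, 2035 - 2024)

print(f"Growth multiple: {growth_multiple:.1f}x")
print(f"Implied annual growth: {cagr:.1%}")
```

That works out to a bit over 36% a year, sustained for more than a decade, in a sector where interconnection queues already exceed 200 GW.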

⚡ In an era of grid scarcity, "playing nice with the grid" almost decides who gets to build at all.

Historically, data centers have been a problem child for utilities: huge consumption, rigid load profiles, 24/7 saturated demand. Through Agentic AI control layers integrated with partners like Emerald AI, Phaidra, and GE Vernova, Omniverse DSX gives AI factories — for the first time — the ability to coordinate with the grid in real time.

Fig 8: DSX three-gear grid collaboration - Flex unlocks grid capacity, Boost lifts performance per watt, Exchange bridges the data silo between IT and OT.

The regulatory implication is straightforward: grid-friendly AI factories get interconnection approval faster. In an era of capacity scarcity, that almost decides who gets to build and who has to wait.
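What "grid-friendly" means in practice can be sketched as a simple curtailment rule. This is a hypothetical illustration, not the behavior of Emerald AI, Phaidra, GE Vernova, or DSX itself: the function `power_cap_mw` and the assumption that a site can shed at most 25% of its load are invented for the example.

```python
def power_cap_mw(nominal_mw: float, requested_reduction: float,
                 max_flex_fraction: float = 0.25) -> float:
    """Site power cap during a grid stress event.

    The site honors the utility's requested reduction up to the share
    of load it can defer (assumed here to be 25%).
    """
    if not 0.0 <= requested_reduction <= 1.0:
        raise ValueError("requested_reduction must be between 0 and 1")
    granted = min(requested_reduction, max_flex_fraction)
    return nominal_mw * (1.0 - granted)


# A 1 GW site asked to shed 10%, then 50%, during peak demand.
mild_event = power_cap_mw(1000, 0.10)
severe_event = power_cap_mw(1000, 0.50)

print(f"Mild event cap: {mild_event:.0f} MW")
print(f"Severe event cap: {severe_event:.0f} MW")
```

The design point is that a load which can promise the utility a bounded, predictable reduction is easier to approve than one that demands its full draw around the clock.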

IV. The "Land Deed" of the AI Era

Every few years, the IT industry produces another standard that promises to unify everything. Most end up as footnotes in old slide decks. DSX does not belong on that list.

What sets it apart is not what it offers engineers, but what it offers the people who sign the checks. A hyperscale data center is a multi-billion-dollar bet amortized over a decade or more; choosing the wrong standard means betting wrong on the next ten years. And DSX has the backing to make that bet feel smaller: Cadence, Dassault, Schneider, Siemens, and Vertiv are already inside the ecosystem; the specification is an open blueprint rather than a walled garden; and gigawatt-scale deployments are already in the ground.

DSX deserves serious attention, not because it is technically the most elegant, but because it lowers the stakes.

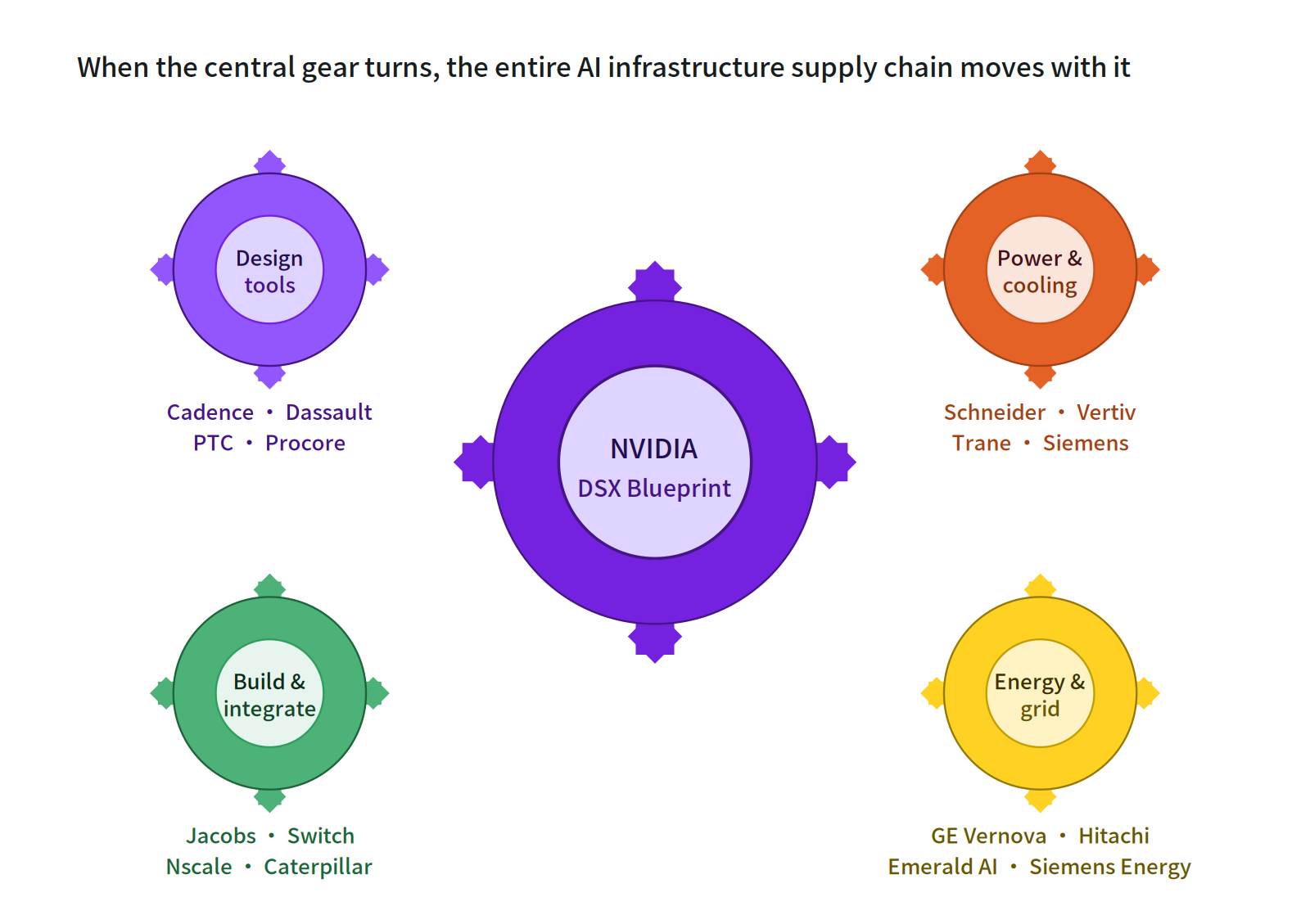

Fig 9: Ecosystem gear coupling - When the central gear turns, the entire AI infrastructure supply chain moves with it.

This is the same transition that gave construction its CAD and semiconductors EDA — for an industry to graduate from craft to industrialization, it has to pass through standardized tooling.

The competitive rule for the next decade has changed. It's no longer "who can build the bigger factory." It's "who can pre-clear all physical risk in the digital world, then squeeze maximum economic output from every watt." Players still relying on "build and adjust" and gut feel may find their ticket is expensive, and already invalid.

Sources: NVIDIA official announcement (March 16, 2026), NVIDIA Developer Blog, BloombergNEF, McKinsey, Bernstein Research, Innovation Endeavors, Tom's Hardware, Data Center Dynamics.