05.12.2026

From CFD to Surrogate Models: Real-Time Physics for Digital Twins

What you will learn

- Why digital twins need surrogate models

- The evolution of surrogates and representative applications

- PhysicsNeMo implementation and the RL integration direction

1. Digital twins need real-time physics

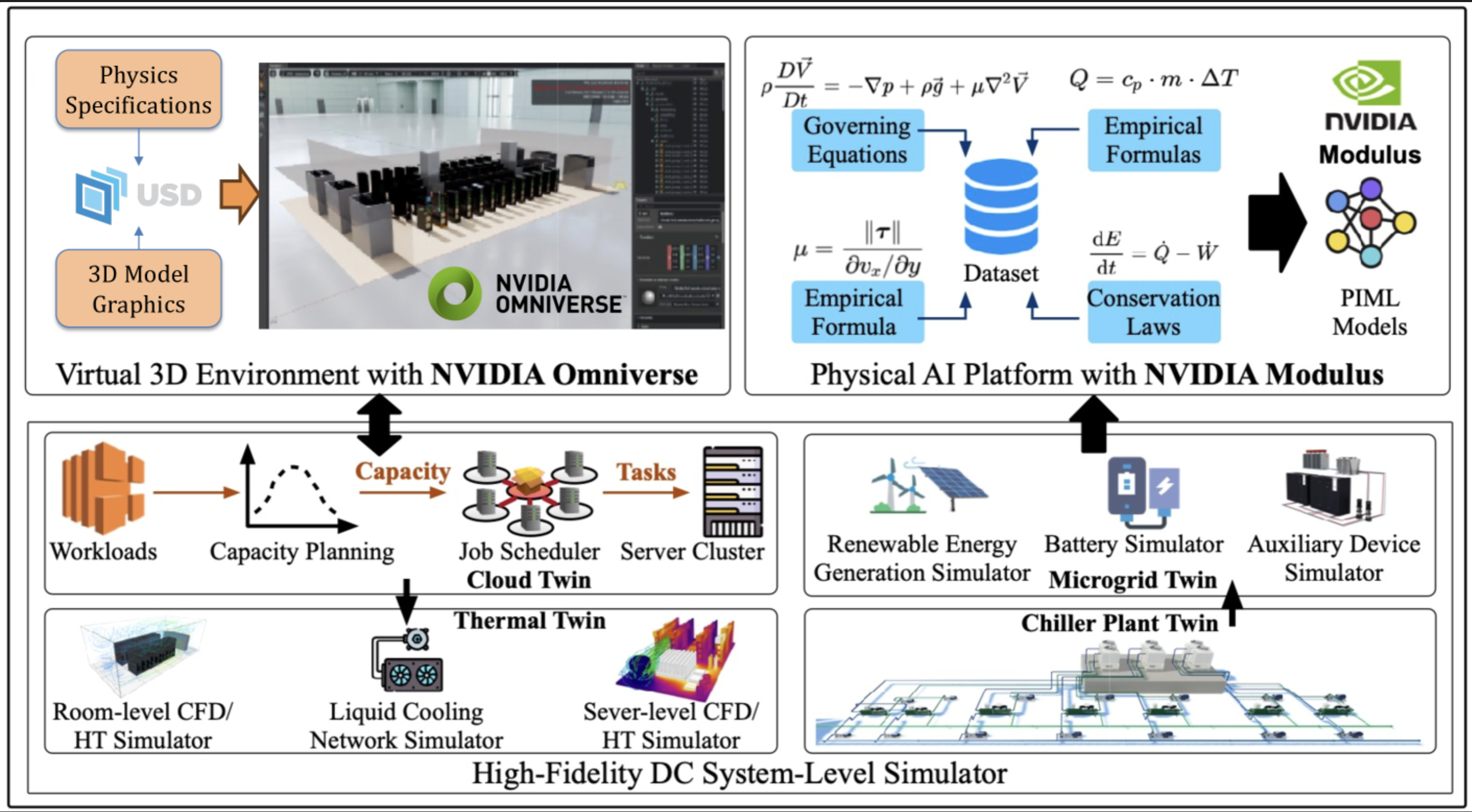

At the October 2025 GTC DC keynote, Jensen Huang unveiled the NVIDIA Omniverse DSX Blueprint, outlining the vision for future AI factories: AI agents first train in a digital twin (virtual environment) before deployment to the physical factory, where they manage power, cooling, and workloads 24/7. Because the capital and operational scale of gigawatt-class factories makes online trial-and-error infeasible (Creating AI agents for gigawatt-scale AI factories with NVIDIA Omniverse DSX Blueprint), the fidelity of the digital twin's internal physics simulation becomes the linchpin of the entire blueprint. If the simulation diverges from the real factory's physical behavior, strategies the agent learns in simulation will fail once deployed.

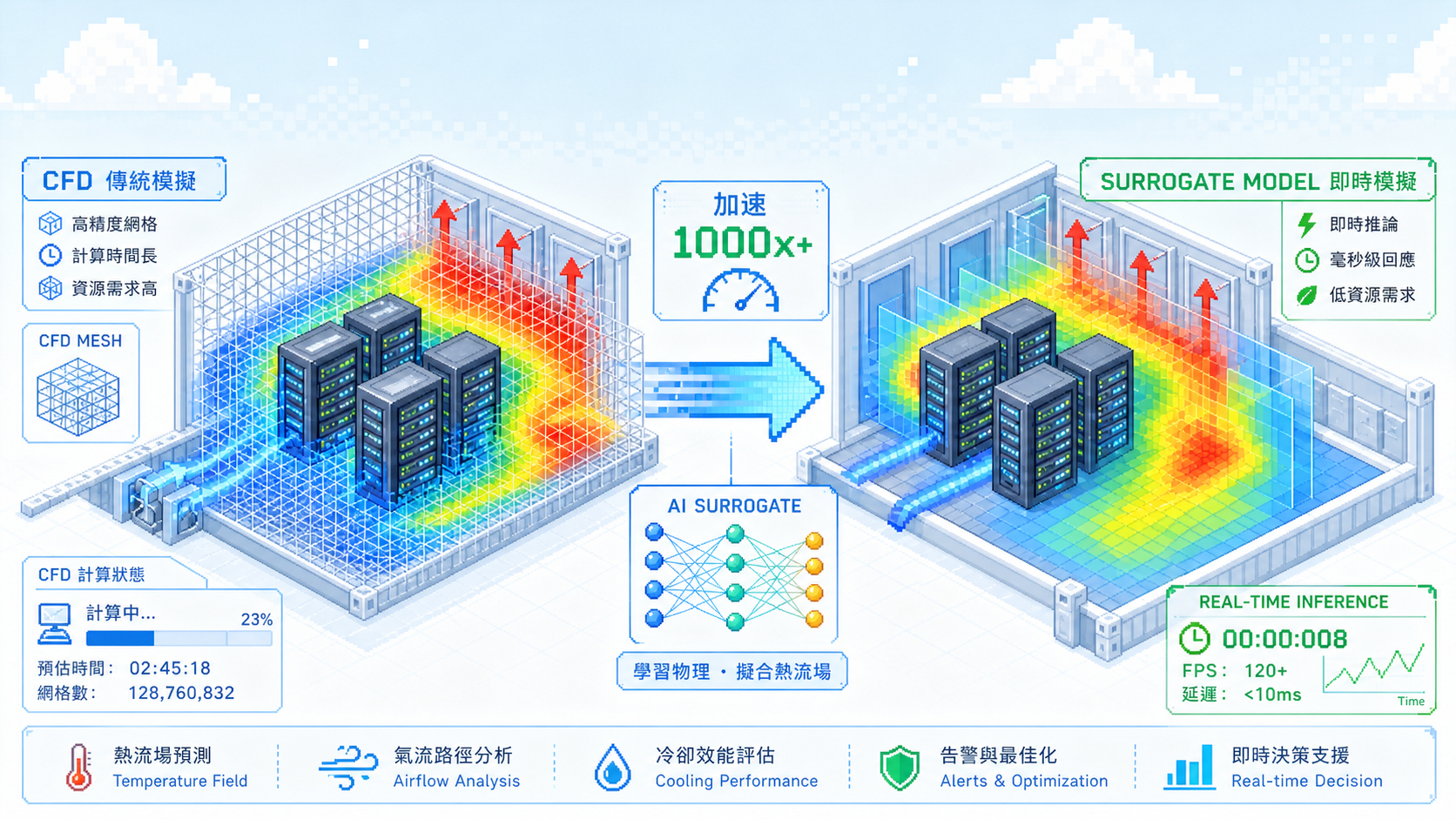

Figure 1: NVIDIA Physical AI architecture. PhysicsNeMo (formerly Modulus) is NVIDIA's physics-informed ML training framework; it produces the surrogate models that replace slow CFD solves inside Thermal Twin. Source: Transforming Future DC Operations via Physical AI

Take Thermal Twin as an example. To make cooling decisions, an agent needs the temperature, airflow direction, and hotspot distribution at every location in the data hall, that is, a complete 3D spatial field. Physical sensors only measure the current state at a handful of points and cannot extrapolate how the system responds to changes in control parameters. Spatial-field reconstruction and what-if scenarios both rely on physics simulation.

2. Traditional CFD and the speed bottleneck

2.1 CFD

The engineering standard is CFD (computational fluid dynamics). The solver discretizes the computational domain into millions of grid points and solves the Navier-Stokes equations (together with energy conservation and a turbulence model) at each point, producing the full velocity, temperature, and pressure fields. With proper calibration, CFD's temperature prediction error can be kept below 1°C, enough to support engineering certification.
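For reference, one common form of the equations involved is written out below. It assumes incompressible flow with the Boussinesq buoyancy approximation, which is a frequent but not universal simplification for indoor airflow, and it omits the turbulence closure. Here $\mathbf{u}$ is velocity, $p$ pressure, $T$ temperature, $\rho$ density, $\nu$ kinematic viscosity, $\alpha$ thermal diffusivity, $\beta$ the thermal expansion coefficient, $\mathbf{g}$ the gravity vector, $c_p$ specific heat, and $q$ a volumetric heat source such as server heat.

$$
\begin{aligned}
\nabla \cdot \mathbf{u} &= 0 \\
\frac{\partial \mathbf{u}}{\partial t} + (\mathbf{u} \cdot \nabla)\,\mathbf{u} &= -\frac{1}{\rho}\nabla p + \nu \nabla^2 \mathbf{u} - \beta\,(T - T_{\mathrm{ref}})\,\mathbf{g} \\
\frac{\partial T}{\partial t} + \mathbf{u} \cdot \nabla T &= \alpha \nabla^2 T + \frac{q}{\rho c_p}
\end{aligned}
$$

A solver has to satisfy all three equations simultaneously in every cell of the mesh, which is where the cost discussed in the next section comes from.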

On the commercial side, the field is dominated by long-established suites such as ANSYS Fluent, Siemens STAR-CCM+, and Dassault Simulia, widely used in aerospace, automotive, and energy design workflows. For data centers, Cadence Reality Digital Twin Platform has emerged recently, integrated into NVIDIA Omniverse for data hall thermal analysis. On the open-source side, OpenFOAM is the mainstream choice, and the case study in NVIDIA's Physical AI paper also uses OpenFOAM to generate its training dataset.

Figure 2: CFD simulation scene inside an Agaruda data center container. Source: simulated by Agaruda DC in Cadence Reality Digital Twin Platform and rendered in NVIDIA Omniverse.

2.2 The CFD bottleneck

CFD's biggest problem is speed, which makes it a poor fit for the repeated-query pattern of a digital twin. A single CFD solve on a hyperscale DC takes hours or even days. Training a surrogate model requires hundreds of CFD runs, so the cost compounds: NVIDIA (2025) used Latin Hypercube sampling across an 18-24°C supply-air temperature range and a 1000-2000 W server power range to collect 500 runs. Add transient simulation (hotspots evolving over time) and every time step requires its own solve, scaling the cost further.
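To make the sampling step concrete, here is a minimal NumPy sketch of Latin Hypercube sampling over the two ranges quoted above. The function name `latin_hypercube` and the seed are our own choices for illustration; this is not the sampling code from the NVIDIA paper.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, seed=0):
    """Split every dimension into n_samples equal strata and hit each stratum once."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)            # shape (n_dims, 2)
    n_dims = bounds.shape[0]
    # One uniform draw inside each stratum, for every dimension.
    unit = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle the strata independently per dimension so the pairings are random.
    for d in range(n_dims):
        unit[:, d] = rng.permutation(unit[:, d])
    low, high = bounds[:, 0], bounds[:, 1]
    return low + unit * (high - low)

# Ranges quoted in the text: supply-air temperature (deg C) and server power (W).
design = latin_hypercube(500, [(18.0, 24.0), (1000.0, 2000.0)])
print(design.shape)   # (500, 2)
print(design[:3])     # first three (temperature, power) cases to hand to the CFD solver
```

Each row is one CFD case to run. Compared with a regular grid, the stratification covers both ranges evenly without the number of runs growing exponentially with the number of parameters.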

CFD's accuracy supports offline engineering decisions, but its solver architecture cannot meet the needs of a real-time decision loop. Surrogate models are the technical direction developed to address exactly this bottleneck.

3. The evolution of surrogate models

A surrogate model (Wikipedia) replaces the CFD solver with a cheaper approximation: it uses a small number of CFD runs to learn the mapping from input conditions to physics fields, trading some accuracy for several orders of magnitude of speedup. This is precisely the role PhysicsNeMo plays inside Thermal Twin in Figure 1. This section walks through the two foundational generations of surrogate models in chronological order.

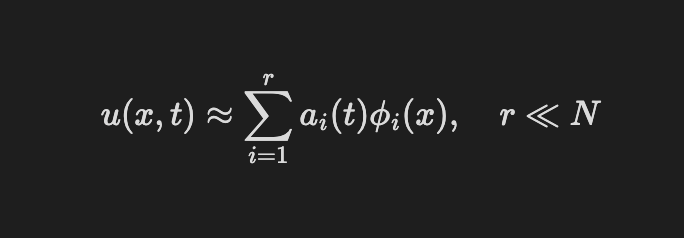

3.1 POD and Gaussian processes

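The earliest generation does not use neural networks. POD (Sirovich 1987) applies SVD to a batch of CFD snapshots to extract the most important spatial modes, so a new flow field $u(\mathbf{x})$ can be expressed as a linear combination of a small number $r$ of modes $\phi_k(\mathbf{x})$ with coefficients $a_k$:

$$
u(\mathbf{x}) \approx \sum_{k=1}^{r} a_k \, \phi_k(\mathbf{x})
$$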

A flow field with millions of degrees of freedom can be reconstructed with only a dozen or so coefficients on the POD basis. Around the same time, Kriging/GP (Sacks et al. 1989) and Bayesian optimization (Jones et al. 1998) established the paradigm of using statistical models to learn mappings from input parameters to scalar outputs, and they remain integrated in the reduced-order model modules of CFD tools such as ANSYS and STAR-CCM+.
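The POD idea fits in a few lines of NumPy. The sketch below uses synthetic snapshots built from three hidden spatial patterns as a stand-in for CFD output, and it subtracts the snapshot mean before the SVD, which is a common convention but our assumption here. All function names are illustrative.

```python
import numpy as np

def pod_basis(snapshots, n_modes):
    """snapshots: (n_dof, n_snapshots). Returns the mean field, leading modes, singular values."""
    mean = snapshots.mean(axis=1, keepdims=True)
    # Thin SVD of the mean-centred snapshots; columns of U are the spatial modes.
    U, S, _ = np.linalg.svd(snapshots - mean, full_matrices=False)
    return mean.ravel(), U[:, :n_modes], S

def project(field, mean, modes):
    """Coefficients of a field on the POD basis."""
    return modes.T @ (field - mean)

def reconstruct(coeffs, mean, modes):
    """Rebuild the full field from a handful of coefficients."""
    return mean + modes @ coeffs

# Synthetic stand-in for CFD snapshots: 3 hidden spatial patterns, 40 "runs".
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 2000)
patterns = np.stack([np.sin(np.pi * x), np.sin(2 * np.pi * x), x**2], axis=1)
snapshots = patterns @ rng.normal(size=(3, 40)) + 20.0

mean, modes, singular_values = pod_basis(snapshots, n_modes=3)
new_field = patterns @ np.array([0.5, -1.0, 2.0]) + 20.0
coeffs = project(new_field, mean, modes)
rel_err = np.linalg.norm(reconstruct(coeffs, mean, modes) - new_field) / np.linalg.norm(new_field)
print(coeffs.shape, rel_err)   # 3 coefficients stand in for 2000 degrees of freedom
```

The reconstruction is essentially exact here only because the synthetic data really is three-dimensional. On real flow fields the decay of `singular_values` tells you how many modes are needed.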

Limitations: POD is a linear dimensionality reduction, so the number of modes it needs grows rapidly on nonlinear or transport-dominated features (the Kolmogorov n-width barrier), and GP regression in this form only outputs scalars. Neither can predict a full 3D physics field in one shot.
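The scalar-output nature of GP surrogates is easy to see in code. Below is a minimal zero-mean GP regression sketch with a squared-exponential kernel. The toy response function, the kernel hyperparameters, and all names are assumptions for illustration, not taken from any of the cited tools.

```python
import numpy as np

def rbf_kernel(A, B, length_scale, variance):
    """Squared-exponential kernel between the rows of A and the rows of B."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return variance * np.exp(-0.5 * d2 / length_scale**2)

def gp_fit_predict(X_train, y_train, X_test, length_scale=0.3, variance=1.0, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at X_test."""
    K = rbf_kernel(X_train, X_train, length_scale, variance) + noise * np.eye(len(X_train))
    K_s = rbf_kernel(X_train, X_test, length_scale, variance)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = variance - (v**2).sum(axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))

def toy_response(X):
    """Hypothetical scalar quantity of interest as a function of one normalised input."""
    return np.sin(3.0 * X[:, 0]) + 0.5 * X[:, 0]

X_train = np.linspace(0.0, 1.0, 8)[:, None]      # 8 "expensive" runs
y_train = toy_response(X_train)
X_test = np.array([[0.25], [0.5]])
gp_mean, gp_std = gp_fit_predict(X_train, y_train, X_test)
print(gp_mean, gp_std)   # one scalar prediction (plus uncertainty) per query point
```

Every query returns a single number with an uncertainty estimate. That is ideal for design optimization over a few parameters, but it does not scale to predicting millions of field values at once.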

3.2 CNNs: deep learning enters physics simulation

A 3D physics field is essentially a multi-channel 3D image, with each grid point storing values such as temperature and velocity, making it a natural fit for CNN-based architectures. Guo, Li & Iorio first applied CNNs to steady-state flow prediction in 2016 (community reimplementation: Steady-State-Flow-With-Neural-Nets), encoding geometry with a Signed Distance Field and achieving roughly a 100× speedup over CFD. Hennigh (2017) extended this line by adopting the U-Net (Ronneberger et al. 2015) architecture with a binary boundary representation, compressing a single 2D airfoil Lattice Boltzmann simulation from 38 seconds to 0.05 seconds. PINNs (Raissi et al. 2017) took a different route by incorporating PDE residuals into the loss function, making the model satisfy the physics laws at training time.

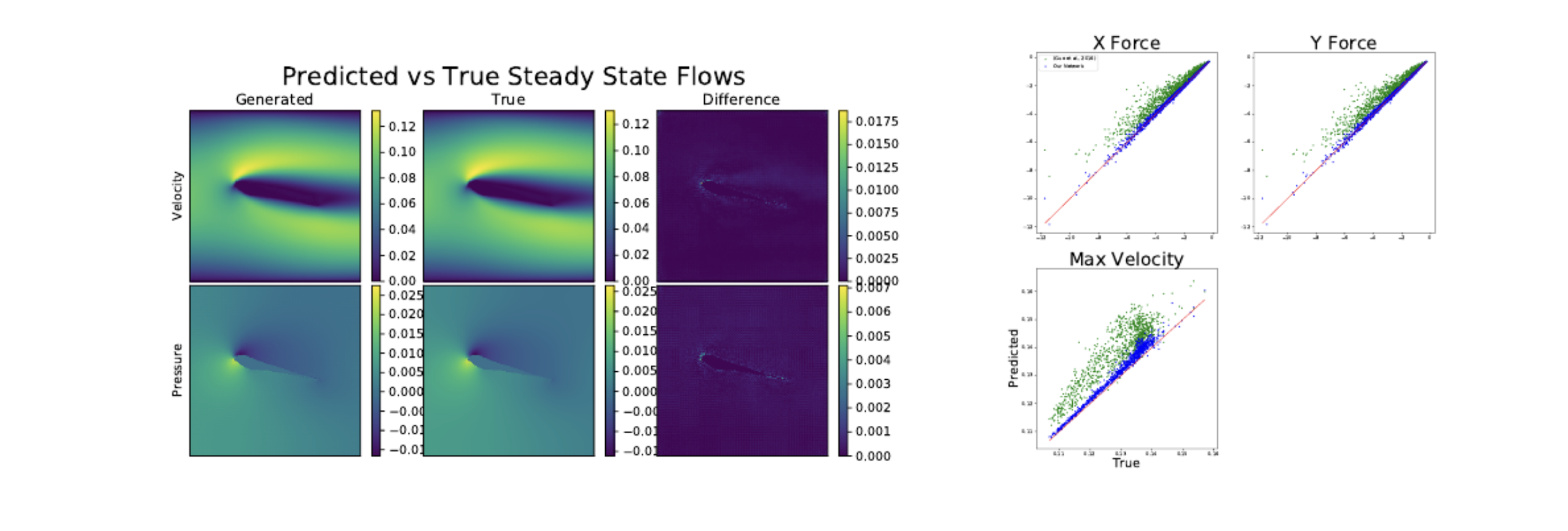

Figure 3: CNN prediction (left) compared with Lattice Boltzmann ground truth (middle); difference on the right. The velocity and pressure fields around the airfoil match the CFD solution closely. Source: Hennigh 2017 (arXiv:1710.10352) Figure 3.
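The two geometry encodings mentioned above, a signed distance field and a binary mask, can be sketched for a simple shape as follows. A circle stands in for the obstacle, and the sign convention (negative inside) is our assumption; the cited papers may use a different one.

```python
import numpy as np

def circle_sdf(nx, ny, center, radius):
    """Signed distance to a circle on a unit-square grid: negative inside, positive outside."""
    x = np.linspace(0.0, 1.0, nx)
    y = np.linspace(0.0, 1.0, ny)
    X, Y = np.meshgrid(x, y, indexing="xy")          # both arrays have shape (ny, nx)
    return np.hypot(X - center[0], Y - center[1]) - radius

sdf = circle_sdf(65, 65, center=(0.5, 0.5), radius=0.2)
binary_mask = (sdf <= 0.0).astype(np.float32)        # 1 inside the obstacle, 0 in the fluid

print(sdf[32, 32])          # value at the circle centre, equal to -radius
print(binary_mask.sum())    # number of grid cells covered by the obstacle
```

The binary mask only says whether a cell is solid. The SDF additionally tells every cell how far it is from the nearest wall, which gives the network a smoother input signal near boundaries.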

Limitations: CNNs depend on regular grids, introducing staircase approximations on high-curvature geometry; training and inference resolution must match, so cross-resolution inference is not possible.

Several architectures have since emerged to break past the structural limits of the CNN generation. Neural Operators learn the operator itself, making inference resolution-independent. GNNs learn directly on CFD's native unstructured mesh, well-suited to complex geometry. Foundation models acquire physics priors through cross-domain pre-training for better generalization. We leave detailed treatment of these to future posts.
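As a small preview of the resolution-independence claim, the sketch below implements a single 1D Fourier layer of the kind used in Fourier Neural Operators and applies the same weights on a 64-point and a 128-point grid. The random `weights` are a stand-in for learned parameters, and a real FNO adds channel mixing, nonlinearities, and a pointwise linear path that are omitted here.

```python
import numpy as np

def spectral_conv_1d(u, weights):
    """One Fourier layer: scale the lowest len(weights) Fourier modes and drop the rest."""
    n = u.shape[-1]
    modes = len(weights)
    # norm="forward" divides by n, so coefficients do not depend on the grid size.
    u_hat = np.fft.rfft(u, norm="forward")
    out_hat = np.zeros_like(u_hat)
    out_hat[:modes] = u_hat[:modes] * weights
    return np.fft.irfft(out_hat, n=n, norm="forward")

def sample_input(n):
    """The same periodic function sampled on an n-point grid over [0, 1)."""
    x = np.arange(n) / n
    return np.sin(2 * np.pi * x) + 0.5 * np.cos(6 * np.pi * x)

rng = np.random.default_rng(0)
weights = rng.normal(size=8) + 1j * rng.normal(size=8)   # stand-in for learned parameters

coarse = spectral_conv_1d(sample_input(64), weights)
fine = spectral_conv_1d(sample_input(128), weights)
print(np.max(np.abs(fine[::2] - coarse)))   # the two outputs agree at shared grid points
```

Because the weights live on Fourier modes rather than on pixels, the same layer can be evaluated on a finer grid without retraining. A CNN kernel, by contrast, is tied to the grid spacing it was trained on.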

4. Surrogate models across application domains

4.1 Data centers: technical paths for Thermal Twin

Data centers require both a high-precision 3D temperature field and a real-time control interface. Two technical paths have emerged in practice, corresponding to CNN and GNN architectures respectively.

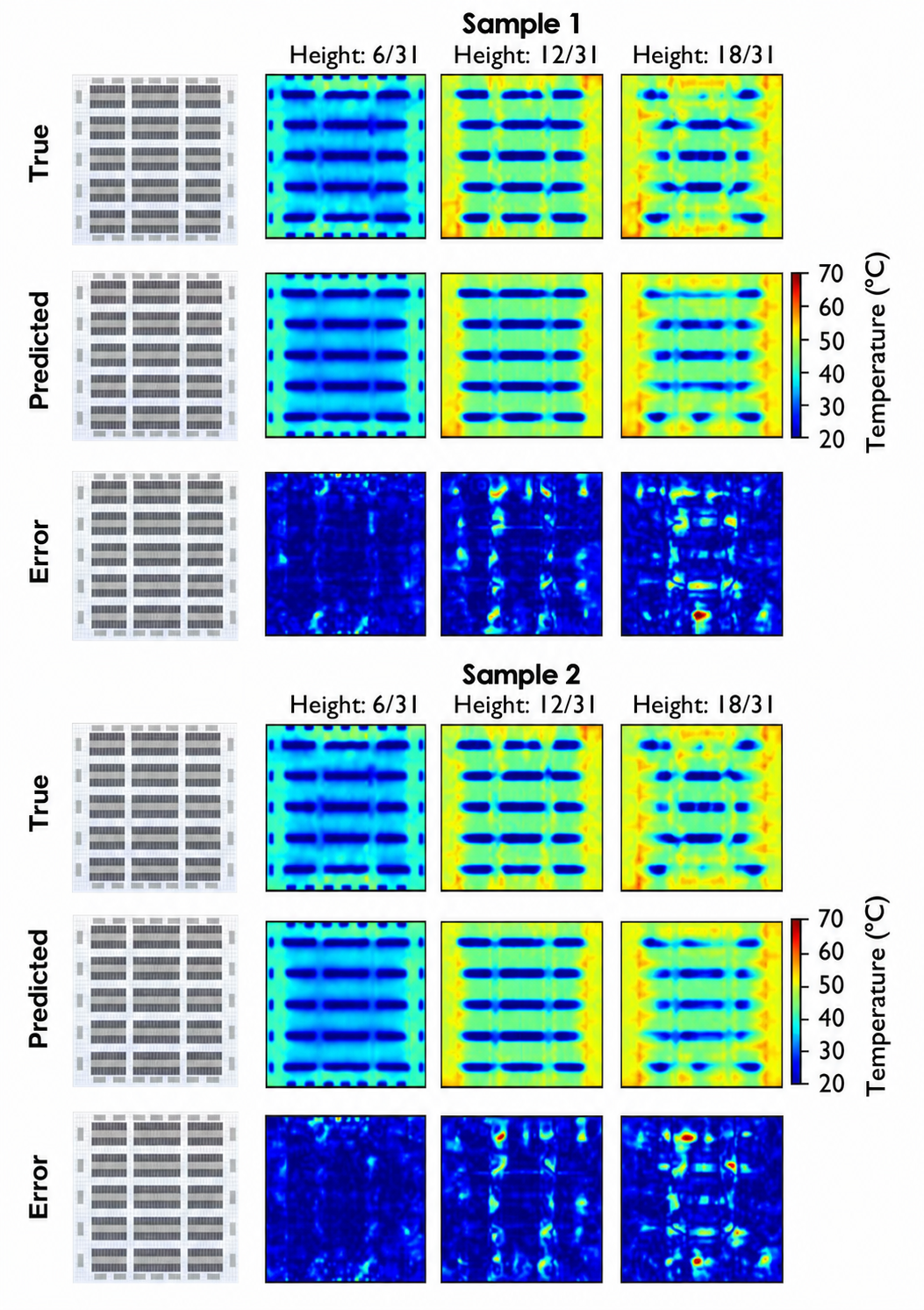

CNN path: Sarkar et al. (2024) discretize the data hall into a 3D voxel grid, use geometry, heat sources, and supply-air conditions as multi-channel inputs, and output the full 3D temperature field. The paper benchmarks six architectures for vision-based DC thermal surrogates, including 3D U-Net, Residual U-Net, U-Net++, Swin-UNETR, and Fourier Neural Operator.

Figure 4: CNN temperature-field predictions across different data-hall sections. The top three rows are CFD ground truth, the middle three are CNN predictions, and the bottom three are error maps. Predictions at each vertical height slice align closely with CFD, with the main errors concentrated near hotspot boundaries. Source: Sarkar et al. 2024 (arXiv:2511.11722) Figure 8.
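A multi-channel voxel input of the kind described for the CNN path could be assembled as in the sketch below. The grid size, the rack placement, the 12 kW load, and the choice to broadcast scalar supply-air conditions into constant channels are all our assumptions for illustration; the paper's actual encoding may differ.

```python
import numpy as np

def build_voxel_input(occupancy, heat_w, supply_temp_c, supply_flow):
    """Stack per-voxel fields and broadcast scalar conditions into a (C, D, H, W) array."""
    shape = occupancy.shape
    channels = [
        occupancy.astype(np.float32),                      # 1 where a rack or wall fills the voxel
        heat_w.astype(np.float32),                         # heat released in each voxel (W)
        np.full(shape, supply_temp_c, dtype=np.float32),   # scalar condition as a constant channel
        np.full(shape, supply_flow, dtype=np.float32),
    ]
    return np.stack(channels, axis=0)

D, H, W = 16, 32, 32
occupancy = np.zeros((D, H, W), dtype=bool)
occupancy[0:8, 10:14, 4:28] = True                         # one hypothetical rack row
heat_w = np.where(occupancy, 12000.0 / occupancy.sum(), 0.0)   # spread 12 kW over the row

voxel_input = build_voxel_input(occupancy, heat_w, supply_temp_c=20.0, supply_flow=1.5)
print(voxel_input.shape)   # (4, 16, 32, 32)
```

A 3D U-Net then maps this `(C, D, H, W)` tensor to a `(1, D, H, W)` temperature field, exactly as a 2D U-Net maps an RGB image to a segmentation map.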

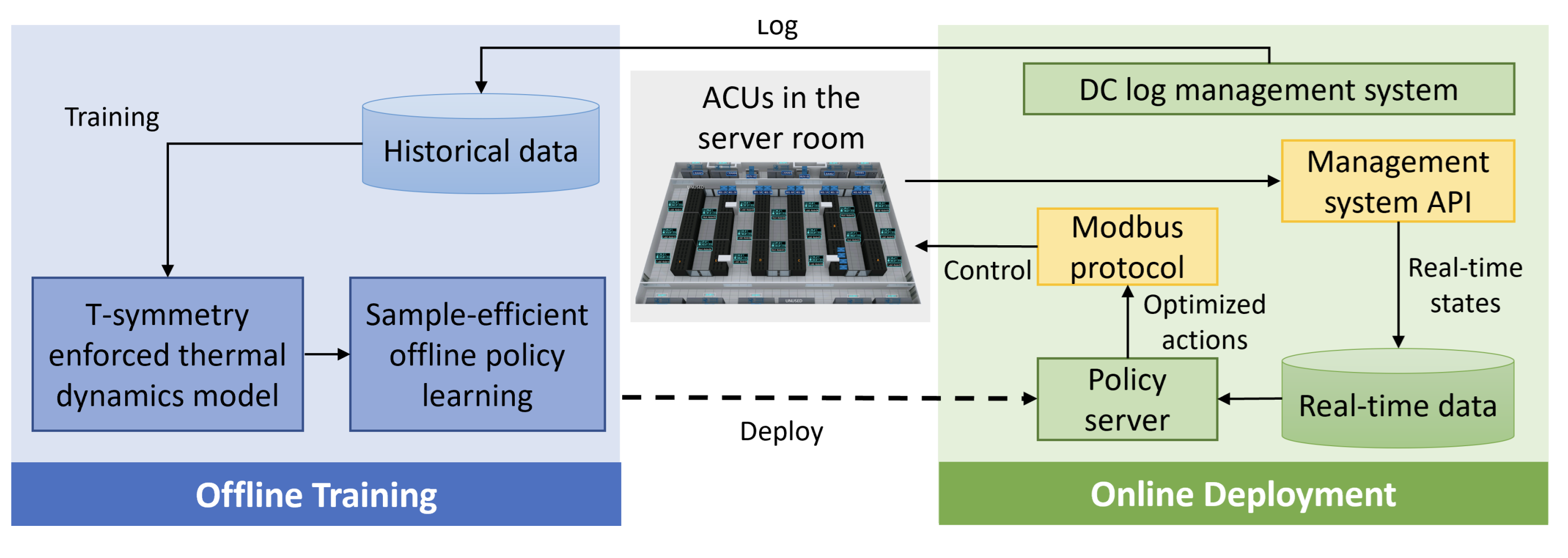

GNN path: Zhan et al. (ICLR 2025) apply a physics-informed GNN directly on CFD's native unstructured mesh to model data hall thermal dynamics, combined with offline RL for cooling policy optimization. The system was deployed to a production environment in 2024 and has accumulated over 2,000 hours of operation, delivering 14-21% energy savings.

Figure 5: The physics-informed GNN + offline RL architecture from Zhan et al., which models heat conduction on mesh nodes, with the RL policy trained against the surrogate model as its environment simulator. Source: Zhan et al. 2025 (arXiv:2501.15085).
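The core operation of a mesh-based GNN is message passing along edges. The sketch below shows one such step with a fixed, hand-set conductance per edge, which makes it an explicit heat-exchange update. In a learned model the message function would be a trained network; this is an illustration of the mechanism, not the model from Zhan et al.

```python
import numpy as np

def conduction_step(temps, edges, conductance, dt):
    """One explicit heat-exchange update on a graph.

    temps: (n_nodes,), edges: (n_edges, 2) undirected node pairs, conductance: (n_edges,).
    """
    src, dst = edges[:, 0], edges[:, 1]
    flux = conductance * (temps[src] - temps[dst])   # message sent along each edge
    delta = np.zeros_like(temps)
    np.add.at(delta, dst, flux)                      # the receiver warms up
    np.add.at(delta, src, -flux)                     # the sender cools by the same amount
    return temps + dt * delta

# Four mesh nodes in a line, with a hotspot at node 0.
temps = np.array([40.0, 20.0, 20.0, 20.0])
edges = np.array([[0, 1], [1, 2], [2, 3]])
conductance = np.ones(3)

after_one = conduction_step(temps, edges, conductance, dt=0.1)
print(after_one)   # [38. 22. 20. 20.]
```

Because the update only needs a node list and an edge list, it works on any unstructured mesh without resampling the geometry onto a regular grid.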

4.2 Other domains: automotive aerodynamics, weather forecasting, aerospace design

Surrogate models are not limited to data centers.

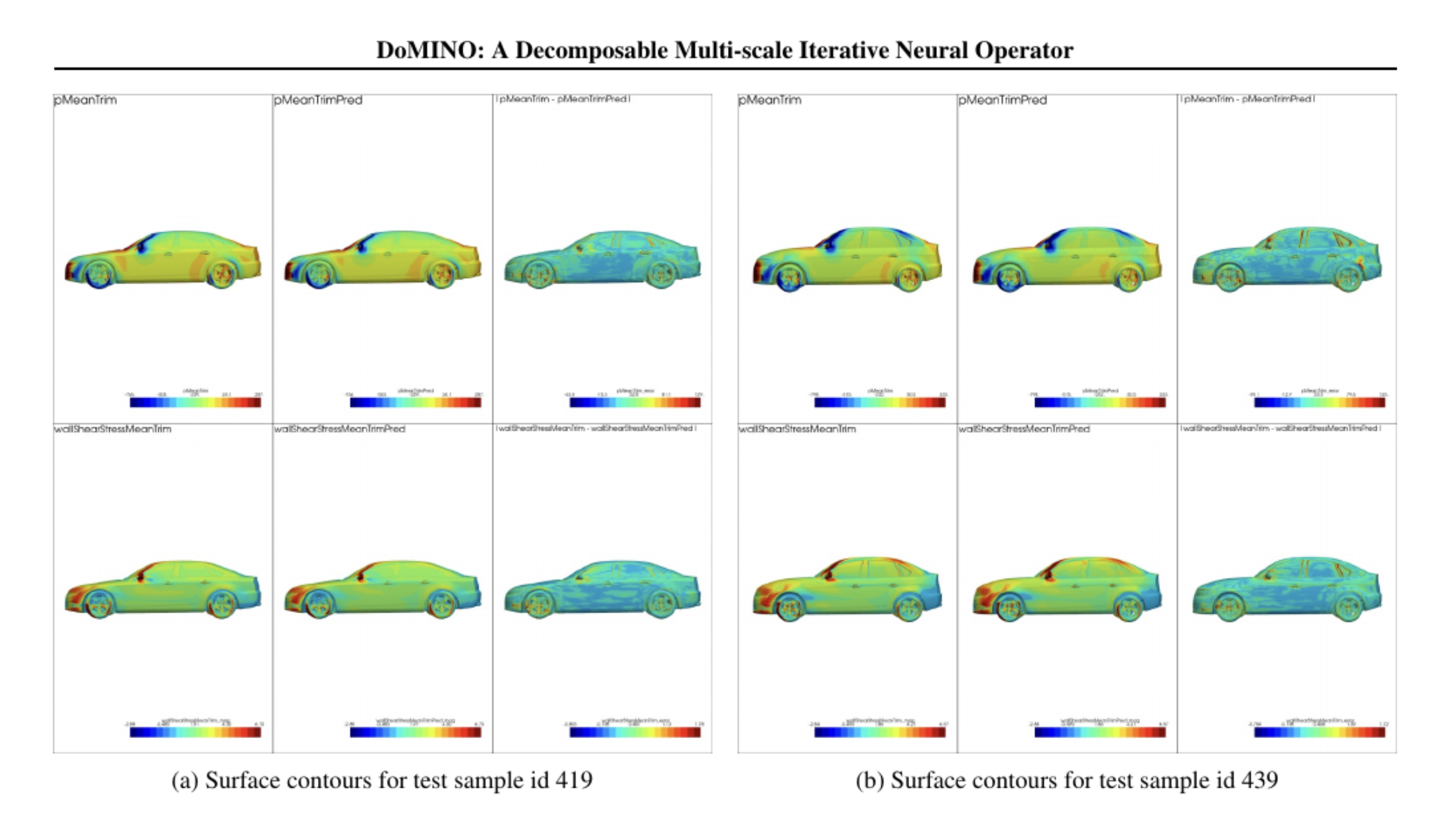

Automotive aerodynamics is another textbook 3D physics-field problem. NVIDIA DoMINO (2025) uses a decomposable multi-scale neural operator to predict body surface pressure and friction fields on the DrivAerML dataset, compressing a single CFD run from hours to seconds.

Figure 6: DoMINO body-surface pressure contours on DrivAerML test samples. The left and right groups each show three viewing angles; the color bar represents pressure coefficient. Surrogate predictions match the CFD ground truth closely across the entire body surface. Source: Ranade et al. 2025 (arXiv:2501.13350) Figure 6.

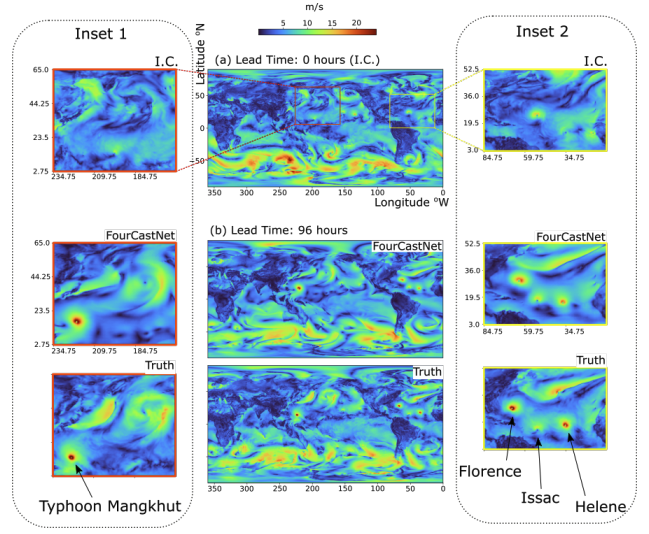

Weather forecasting is one of the domains most reshaped by neural operators. FourCastNet (2022) uses an FNO variant at 0.25° resolution to produce a week-long forecast in under 2 seconds, matching the accuracy of numerical weather prediction (NWP) while compressing inference from hours to seconds. GraphCast (2023) trains a GNN on ERA5 reanalysis and beats the operational IFS HRES on multiple variables in ECMWF's evaluation. AIFS (2024) is ECMWF's in-house AI forecasting system, now part of their official product line.

Figure 7: FourCastNet's 36-hour global total precipitation (TP) forecast compared with ground truth. The two insets on the left and right show zoomed regions where FourCastNet accurately captures tropical cyclone structure and mid-latitude precipitation bands. Source: Pathak et al. 2022 (arXiv:2202.11214) Figure 3.

Aerospace design is where surrogate models were first commercialized. POD/GP surrogates combined with Bayesian optimization are still used to sweep airfoil and turbine-blade parameter spaces, and they remain integrated in the reduced-order model modules of mainstream commercial CFD tools today.

5. Choosing a surrogate model framework

Several open-source frameworks for physics simulation exist, each with its own focus:

- NVIDIA PhysicsNeMo: an industrial-grade multi-architecture framework covering CNN, FNO, GNN, Diffusion, and PINN, with integrated Omniverse visualization and GPU optimization.

- DeepXDE: the most widely used open-source framework in the PINN academic community, suited for rapid prototyping.

- Neural Operator Library: maintained by the FNO authors, covering FNO, TFNO, GINO, Codomain Attention, and other operator-learning variants.

- PyTorch Geometric / DGL: general-purpose GNN frameworks, the standard choice for mesh-based physics simulation.

- JAX-CFD / PhiFlow: differentiable CFD frameworks that make the physics solver itself differentiable, enabling joint training with NNs.

The implementation in this post uses NVIDIA PhysicsNeMo:

- Broadest architectural coverage, with CNN, FNO, GNN, and Diffusion in a single framework.

- Built-in datacenter reference pipeline, usable as a starting point.

- Native integration with Omniverse and SimReady.

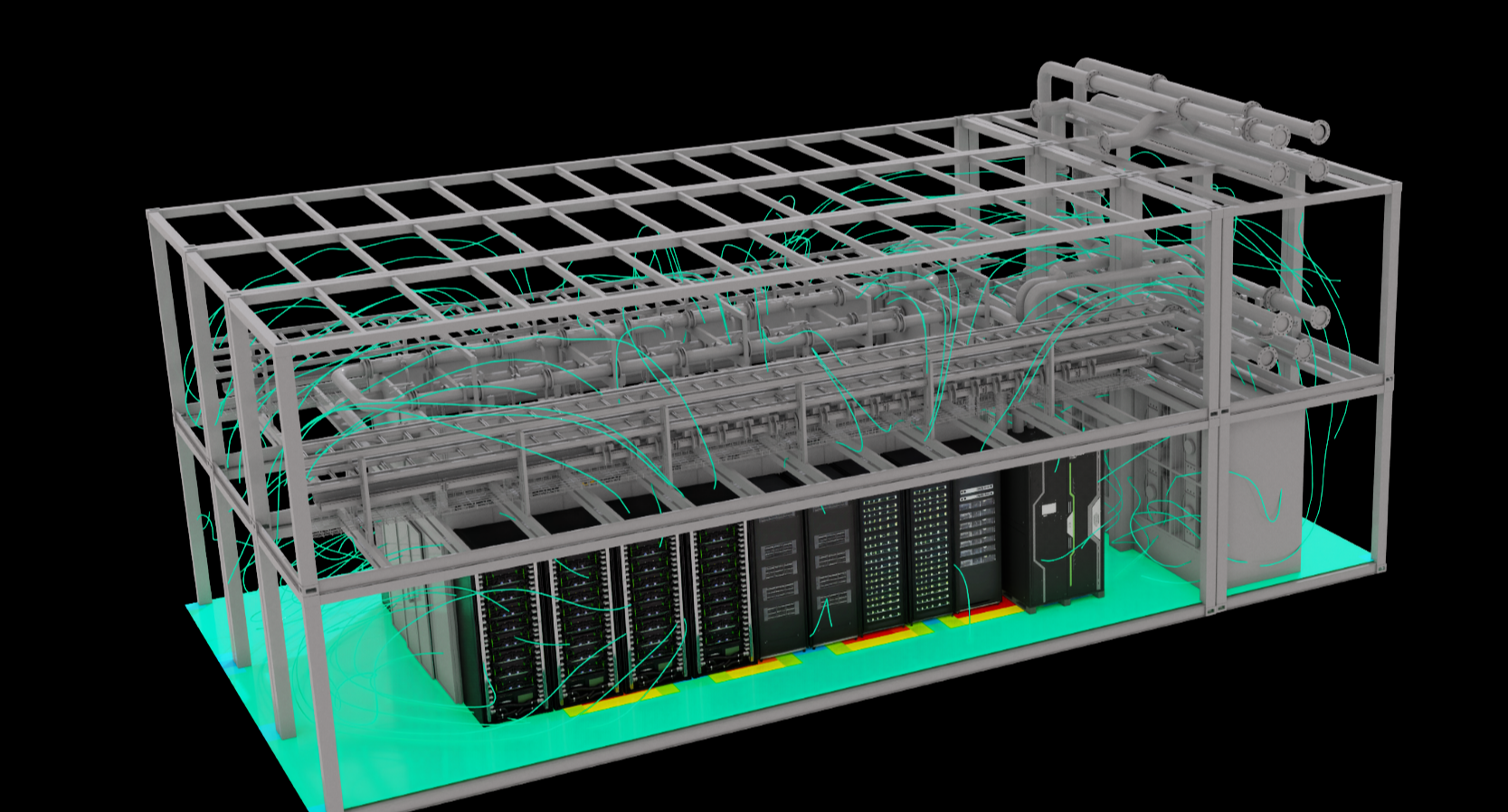

6. Agaruda's PhysicsNeMo experiments

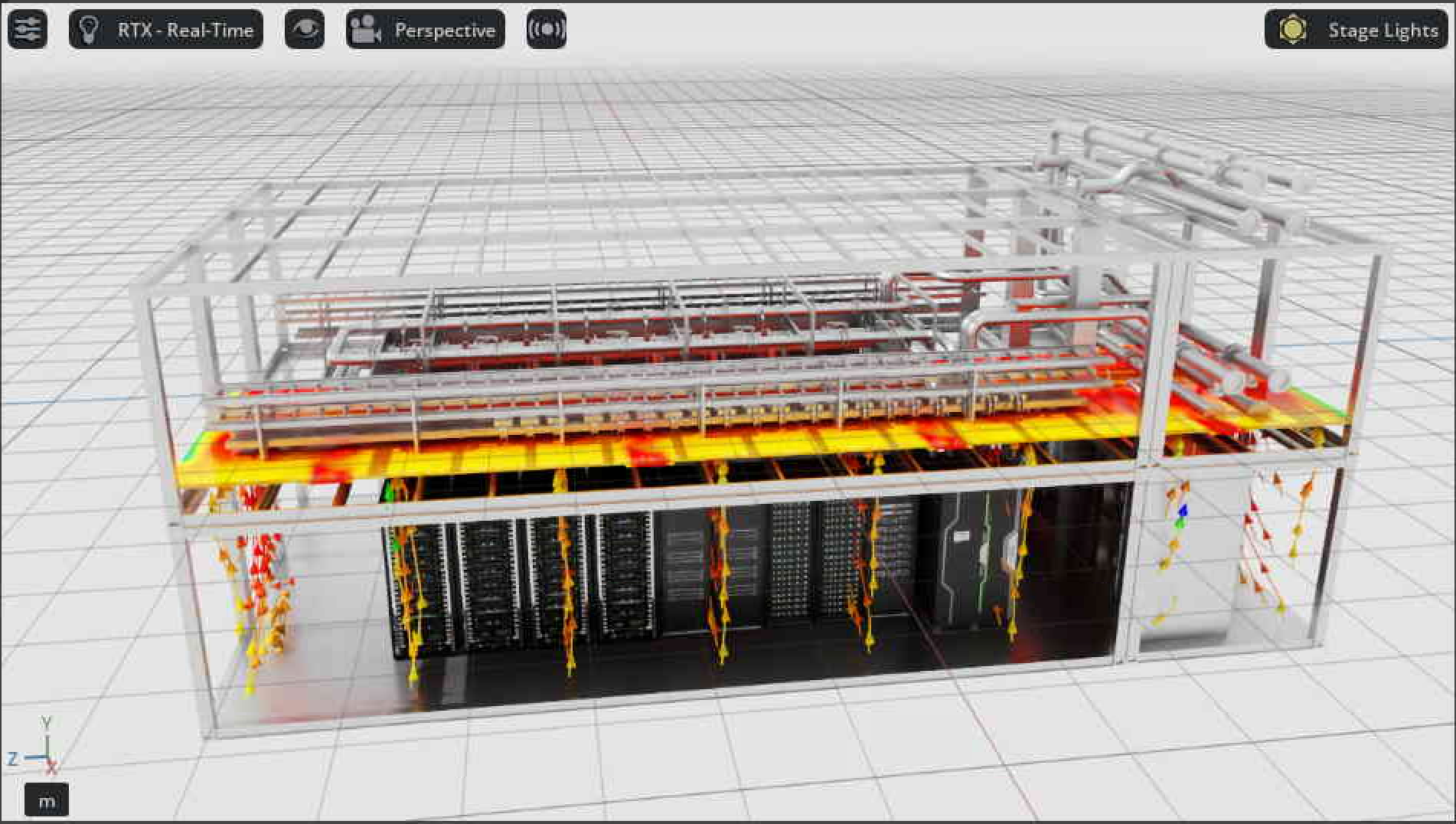

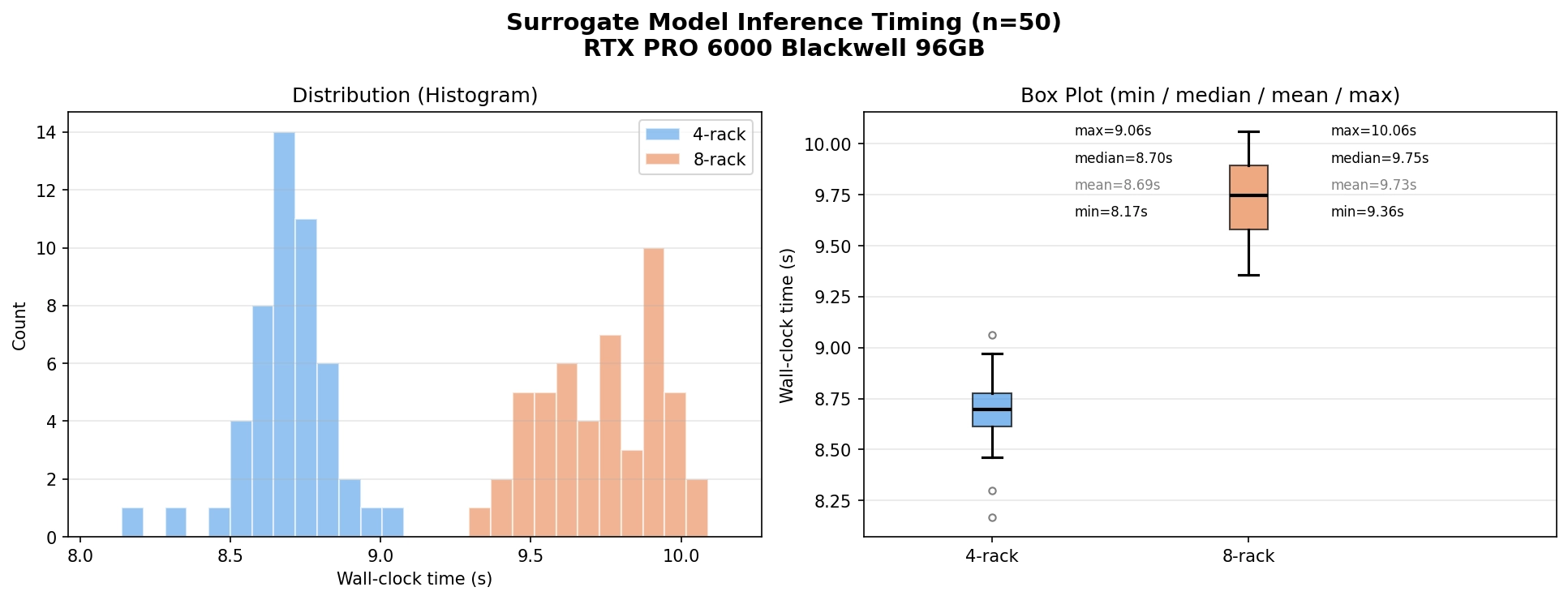

We trained a 3D U-Net surrogate using the PhysicsNeMo CFD datacenter example and the Datacenter CFD Dataset (approximately 400 OpenFOAM steady-state simulations, 35-55 racks), then converted the inference output to VTK and overlaid it on Agaruda's container 3D scene in NVIDIA Omniverse. High-quality CFD datasets are expensive and hard to obtain, so NVIDIA and Wistron's open release of this data and pipeline is a rare public resource in the DC surrogate space.
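For readers who want to try the VTK step, here is one minimal way to write a regular-grid scalar field as a legacy ASCII VTK file using only NumPy and the standard library. It is a sketch under the assumption that the inference output is a `(nz, ny, nx)` array on a uniform grid; the function name is ours and this is not necessarily the conversion used in our pipeline.

```python
import io
import numpy as np

def write_vtk_structured_points(stream, field, spacing=(1.0, 1.0, 1.0),
                                origin=(0.0, 0.0, 0.0), name="temperature"):
    """Write a (nz, ny, nx) scalar array as a legacy ASCII VTK STRUCTURED_POINTS dataset."""
    nz, ny, nx = field.shape
    stream.write("# vtk DataFile Version 3.0\n")
    stream.write("surrogate inference output\n")
    stream.write("ASCII\n")
    stream.write("DATASET STRUCTURED_POINTS\n")
    stream.write(f"DIMENSIONS {nx} {ny} {nz}\n")
    stream.write(f"ORIGIN {origin[0]} {origin[1]} {origin[2]}\n")
    stream.write(f"SPACING {spacing[0]} {spacing[1]} {spacing[2]}\n")
    stream.write(f"POINT_DATA {nx * ny * nz}\n")
    stream.write(f"SCALARS {name} float 1\n")
    stream.write("LOOKUP_TABLE default\n")
    # Legacy VTK expects x to vary fastest; a C-order ravel of (nz, ny, nx) does that.
    for value in field.ravel(order="C"):
        stream.write(f"{float(value):.6g}\n")

# Tiny placeholder field; a real run would pass the surrogate's predicted temperatures.
field = 20.0 + np.arange(2 * 3 * 4, dtype=float).reshape(2, 3, 4)
buffer = io.StringIO()
write_vtk_structured_points(buffer, field, spacing=(0.1, 0.1, 0.1))
vtk_text = buffer.getvalue()
print(vtk_text.splitlines()[4])   # DIMENSIONS 4 3 2
```

Passing an open file handle instead of the `StringIO` buffer writes the same content to disk, where ParaView or any other VTK-aware tool can open it.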

The dataset and example still come with a structural limitation: the model input only accepts geometry (SDF), while supply-air temperature, supply-air flow, rack power, and other operating conditions are all fixed in the boundary conditions, so the model cannot reflect thermal changes under varying operating conditions. We therefore positioned this experiment as a pipeline feasibility check, comparing the thermal fields of 4-rack and 8-rack configurations under the same container geometry. Training time was limited and absolute accuracy did not reach our target, but the thermal-field trend as rack density increases is clearly captured.
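Accuracy statements like the one above are usually backed by a few field-level error metrics. The sketch below shows the kind of summary one could compute between a predicted and a reference temperature field. The metric selection and the placeholder arrays are our assumptions, and the numbers are not results from our experiment.

```python
import numpy as np

def field_metrics(pred, truth):
    """Mean absolute, root-mean-square, and worst-case error between two fields."""
    err = pred - truth
    return {
        "mae": float(np.mean(np.abs(err))),
        "rmse": float(np.sqrt(np.mean(err**2))),
        "max_abs": float(np.max(np.abs(err))),
    }

# Placeholder fields: a uniform 25 deg C reference and a prediction with one 4 deg C miss.
truth = np.full((4, 4, 4), 25.0)
pred = truth.copy()
pred[0, 0, 0] += 4.0

metrics = field_metrics(pred, truth)
print(metrics)   # {'mae': 0.0625, 'rmse': 0.5, 'max_abs': 4.0}
```

Reporting the maximum error alongside the averages matters for thermal work, because a single missed hotspot can hide behind a small mean error.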

Figure 8: Surrogate inference for the 4-rack configuration overlaid on Agaruda's container 3D model. Airflow is dominated by green and yellow, corresponding to a lower thermal load. Source: Agaruda, PhysicsNeMo inference rendered in NVIDIA Omniverse.

Figure 9: Surrogate inference for the 8-rack configuration. With the rack count doubled, airflow shifts markedly toward red, reflecting the physical trend of thermal load rising with density. Source: Agaruda, PhysicsNeMo inference rendered in NVIDIA Omniverse.

The visual rendering is comparable to the Cadence CFD simulation in Figure 2, but producing one field takes seconds of inference instead of hours of solving, a speedup of several orders of magnitude. The model also qualitatively captures how increased rack density drives thermal-field changes.

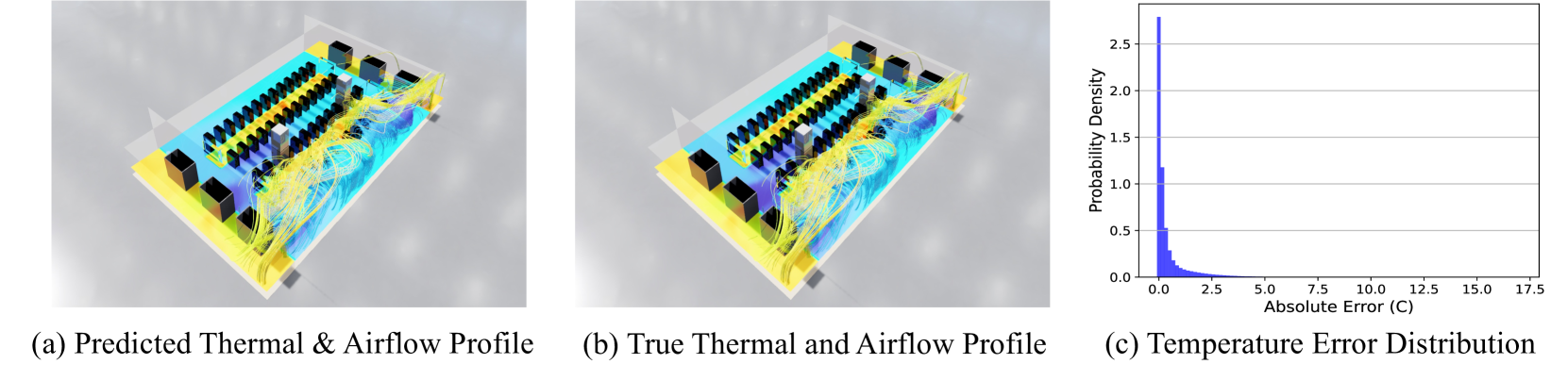

The current dataset only includes geometry as input. Our next step at Agaruda is to build our own CFD dataset covering supply-air temperature, supply-air flow, rack power, and other operating conditions, then retrain the surrogate so it can directly reflect the impact of operational adjustments on the thermal field. The parameterized surrogate architectures in NVIDIA (2025) and Sarkar et al. (2024) are important references for this direction.

Figure 10: Predictions from the parameterized surrogate in NVIDIA's PhyAI paper (right) compared with CFD ground truth (left), offering a reference point for our next phase. Source: Transforming Future DC Operations via Physical AI.

7. Next step: surrogate + RL for DC cooling optimization

Surrogate models remove the speed bottleneck of physics prediction, but prediction is only a means to an end: the core operational goal in a data center is to keep driving energy consumption down.

One viable path is to use the surrogate as an environment model and let an agent safely trial cooling policies inside the digital twin. This path has accumulated industrial validation: Meta (2024) cut DC fan energy by 20% with simulator-based RL; Phaidra (2026) reduced thermal-peak overshoot by 75-80% on an NVIDIA DGX SuperPOD liquid-cooled deployment; Wu et al. (2026) used a CNN-LSTM-Transformer surrogate as the RL environment and achieved an 11.68% energy reduction at the CINECA supercomputing center. Integrating a parameterized surrogate with an RL agent into the DC cooling control loop along this direction is Agaruda's next engineering priority.
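To show what "surrogate as environment model" means in code, here is a minimal Gym-style environment sketch in plain Python. Everything in it is hypothetical: `toy_surrogate` is an analytic stand-in for a trained model, and the energy proxy, penalty weight, and temperature limit are illustrative numbers rather than values from any of the cited deployments.

```python
import random

def toy_surrogate(supply_temp_c, fan_speed, it_load_kw):
    """Hypothetical stand-in for a trained surrogate: returns peak inlet temperature (deg C)."""
    return supply_temp_c + 0.08 * it_load_kw / max(fan_speed, 0.1)

class CoolingEnv:
    """Gym-style environment whose dynamics come from a surrogate instead of a CFD solver."""

    def __init__(self, surrogate, temp_limit_c=32.0, horizon=24, seed=0):
        self.surrogate = surrogate
        self.temp_limit_c = temp_limit_c
        self.horizon = horizon
        self.rng = random.Random(seed)

    def reset(self):
        self.t = 0
        self.it_load_kw = self.rng.uniform(40.0, 80.0)
        return (self.it_load_kw,)

    def step(self, action):
        supply_temp_c, fan_speed = action
        peak = self.surrogate(supply_temp_c, fan_speed, self.it_load_kw)
        # Toy energy proxy: fan power ~ speed cubed, chiller effort grows with colder supply air.
        energy = 10.0 * fan_speed**3 + 1.5 * (27.0 - supply_temp_c)
        penalty = 50.0 * max(0.0, peak - self.temp_limit_c)
        self.t += 1
        self.it_load_kw = self.rng.uniform(40.0, 80.0)
        done = self.t >= self.horizon
        return (self.it_load_kw,), -(energy + penalty), done, {"peak_temp_c": peak}

def run_episode(env, policy):
    """Roll out one episode and return the summed reward."""
    obs, total, done = env.reset(), 0.0, False
    while not done:
        obs, reward, done, _ = env.step(policy(obs))
        total += reward
    return total

def conservative_policy(obs):
    return (18.0, 1.0)                       # coldest air, full fan speed, regardless of load

def load_aware_policy(obs):
    return (22.0, 0.5 + obs[0] / 160.0)      # warmer air, fan speed scaled with IT load

conservative_return = run_episode(CoolingEnv(toy_surrogate, seed=0), conservative_policy)
load_aware_return = run_episode(CoolingEnv(toy_surrogate, seed=0), load_aware_policy)
print(conservative_return, load_aware_return)
```

An RL algorithm would replace the two hand-written policies and learn the action from the observation. The point of the sketch is that each `step` call costs one surrogate inference, so millions of trial episodes become affordable without touching the physical facility.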