05.11.2026

Cinta × NemoClaw: A Technical Deep Dive

What you will learn

How a NemoClaw-centric Agent execution architecture enables compliance automation for the AI Factory.

01 — CONTEXT

Cinta Platform is the AI Factory design compliance platform built by the AGARUDA team. It integrates the Omniverse DSX Blueprint [1] reference framework and uses AI Agents to turn project assets into traceable compliance analysis and reports. This article focuses on how NemoClaw enables AI Agents to run compliance checks autonomously inside secure sandboxes.

02 — AI AGENT

How Cinta Agents change the compliance workflow

A typical Agent framework works like this: the user asks a question, the Agent answers, then the session ends. Next time, the Agent has no memory of what came before.

Cinta's Agents work differently. They run inside OpenShell [7] sandboxes provisioned by NemoClaw [4], and they keep working even when no one is interacting with them:

- Always on: The Agent runs continuously and reacts to events, kicking off processing the moment a change lands.

- Proactive notifications: No one needs to ask "is there anything new today?" The Agent continuously monitors design and standards-related changes on its own, and reports issues to the right people when needed.

- Cross-session memory: Issues found on each IT Module, exemptions that have been granted, and accumulated patterns all remain available when you come back. This knowledge lives in workspace memory and doesn't disappear when a session ends.

A Cinta Agent behaves more like a long-tenured on-site compliance consultant than a button-press chatbot.

When a BOM changes, the pipeline runs automatically

When an engineer uploads a new BOM in Cinta (or swaps a server from B200 to B300), the Agent detects the change within seconds, re-evaluates the compliance impact, generates a report, and notifies the relevant stakeholders. High-risk items are routed for human approval; low-risk items are handled end-to-end.
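The routing step described above can be sketched as follows. This is a minimal illustration, not Cinta's implementation: the `Finding` type, the two-level `Risk` enum, and the rule identifiers are all hypothetical names introduced for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class Finding:
    module_id: str  # IT Module the finding belongs to (hypothetical field)
    rule_id: str    # hypothetical rule identifier
    risk: Risk


def route_findings(findings: list[Finding]) -> dict[str, list[Finding]]:
    """Split findings into those handled end-to-end and those needing human approval."""
    routed: dict[str, list[Finding]] = {"auto": [], "needs_approval": []}
    for finding in findings:
        key = "needs_approval" if finding.risk is Risk.HIGH else "auto"
        routed[key].append(finding)
    return routed


findings = [
    Finding("module-a", "cooling-capacity", Risk.HIGH),
    Finding("module-a", "label-format", Risk.LOW),
]
routed = route_findings(findings)
print(len(routed["auto"]), len(routed["needs_approval"]))  # prints "1 1"
```

Keeping the routing decision in one small function makes the human-approval boundary easy to review and test independently of how findings are produced.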

Past experience is preserved

The Agent remembers what was scanned on each IT Module, what issues came up, and how they were resolved. The next time you encounter a similar configuration, it can tell you directly: "This IT Module had a cooling compatibility issue last time; the resolution was..." The more you use it, the sharper the recommendations become.

It tells you before you ask

A new revision of a compliance verification document? An EOL notice or a new model from a supplier? The Agent tracks these changes itself, evaluates the impact on your existing design, and pushes the result to you. You don't have to remember to go look.

High-stakes decisions always involve humans

The Agent doesn't make major decisions on its own. When it encounters a high-risk finding, it packages an action plan and routes it to a manager for approval. If a similar deviation has been waived in the past, the Agent surfaces the prior approval rationale and conditions so you have context for the decision.

Every step is traceable

What the Agent decided, what data it read, which rule it applied, why it chose one option over another: all of it is recorded. At audit time, no one has to reconstruct "why we made that decision." Just open the decision trail.
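As an illustration of what one entry in such a decision trail might hold, here is a minimal sketch. The field names and the JSON-lines serialization are assumptions made for this example; the article does not specify Cinta's actual record schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One decision-trail entry (hypothetical schema)."""
    decision: str           # what the Agent decided
    inputs_read: list[str]  # which data it read
    rule_applied: str       # which rule it applied
    rationale: str          # why it chose this option
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_audit_line(record: DecisionRecord) -> str:
    """Serialize a record as one JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    decision="flag_for_review",
    inputs_read=["bom-snapshot-2"],
    rule_applied="cooling-capacity",
    rationale="Rack power exceeds the cooling budget for this IT Module.",
)
print(to_audit_line(record))
```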

03 — ENTERPRISE

Why NemoClaw

OpenClaw [9] is already a fairly complete agent runtime: sessions, memory, scheduling, hooks, sub-agents. It's a fine fit for personal tools or prototypes.

But once you want an AI Agent to handle compliance data and make automated judgments, a runtime alone isn't enough. You need to be sure the Agent can only access what it's supposed to access, that the LLM won't leak sensitive information, and that the inference API key is never visible from inside the sandbox.

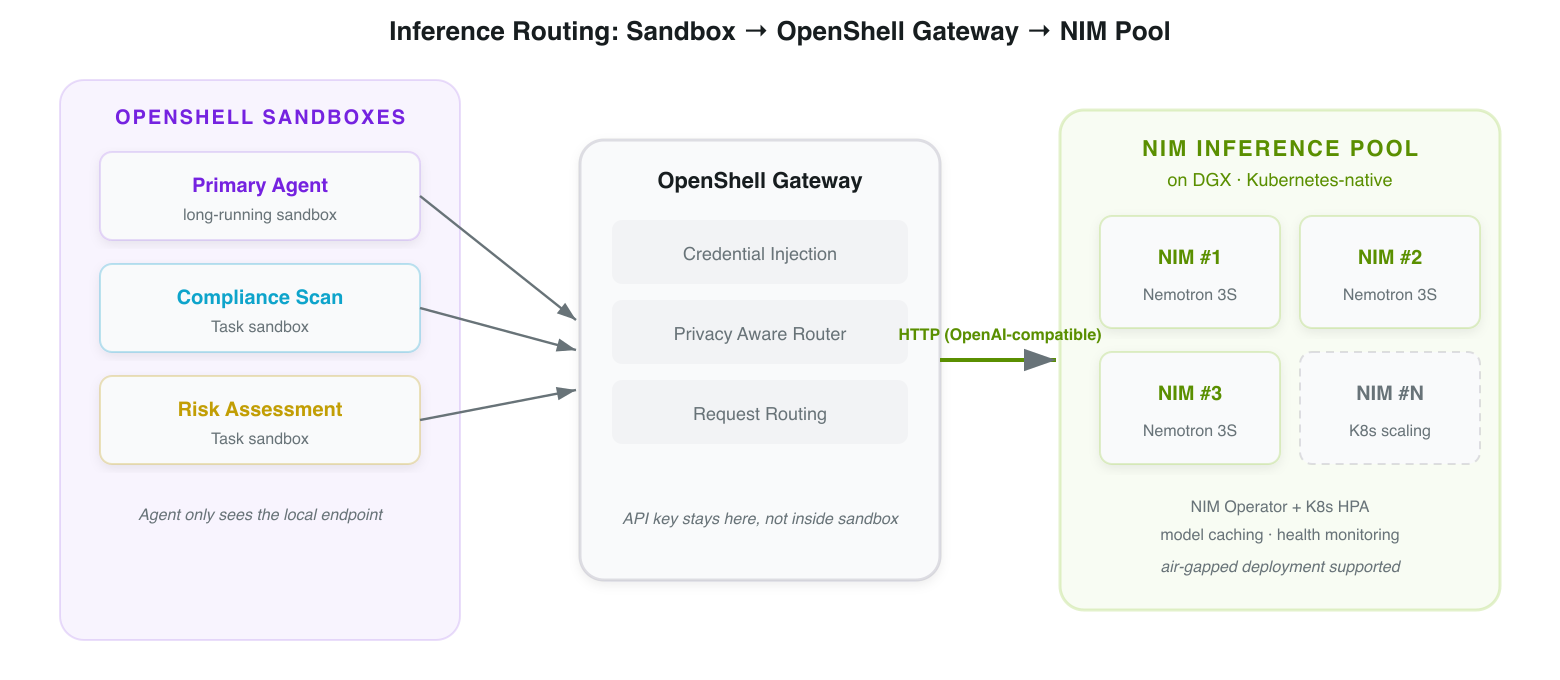

This is where NemoClaw and OpenShell divide responsibilities. OpenShell provides the sandbox infrastructure: container isolation, network policy, and the credential gateway. NemoClaw is the reference stack built on top of OpenShell: it assembles these pieces and handles onboarding, blueprint configuration, inference routing, and state management. OpenClaw agents run inside it.

Table 1. OpenClaw vs. NemoClaw + OpenShell capability comparison

| Capability | OpenClaw | NemoClaw + OpenShell as practiced in Cinta |
|---|---|---|
| Agent Runtime | session, memory, cron, hooks, sub-agents | Inherits the full set of OpenClaw capabilities. Cinta uses hooks for real-time response to BOM changes, and sub-agents (sessions_spawn) for parallel lightweight tasks inside the sandbox. Heavyweight tasks that need stronger isolation are run by the Cinta Platform Service, which spins up dedicated Task sandboxes on the host. |
| Execution isolation | Relies on host OS-level controls | OpenShell sandbox: at the sandbox layer, file system access scope and network connection allowlists are restricted. At the application layer, data authorization (which Agent can read which data) is controlled by the Cinta Platform API. The two layers maintain clear separation of responsibilities: OpenShell governs isolation boundaries, Cinta governs business permissions. |
| LLM output safety | Custom implementation required | Cinta integrates NeMo Guardrails [13], using configurable input/output rails to mask PII such as facility addresses and contact information, and to constrain the Agent's response scope and compliance-judgment logic. |
| Inference credential management | API key inside the agent's environment | Inference Routing: Nemotron 3 Super is deployed on the customer's own DGX. Agents route to the NIM endpoint via the OpenShell gateway, and the API key stays on the host side, never entering the sandbox. |
| Network policy | No built-in controls | OpenShell Network Policy: Cinta Agents perform inference inside the sandbox via inference.local, which the OpenShell gateway forwards to NIM on the host side. The Agent never connects directly to the NIM endpoint and has no access to the external network, ensuring compliance data stays inside. |
| Sandbox lifecycle | No notion of sandboxes | OpenShell Sandbox Lifecycle: Cinta splits work along two paths. Lightweight tasks run in parallel inside the sandbox via OpenClaw sub-agents driven by the Primary Agent. Heavyweight tasks are run by the Cinta Platform Service, which creates a dedicated Task sandbox (with its own Task Agent) on the host and tears it down when done. The orchestration pattern is Cinta's; the sandbox lifecycle is executed by OpenShell. |
| Audit traceability | Session transcript | Workspace memory + external durable layer: every finding, approval decision, and exemption authorization in Cinta is recorded both in workspace memory (for the Agent's working reference) and in an external audit event store (for compliance auditing). The system of record is the external system, not the sandbox state. |

Inference model and deployment

Cinta Agents use Nemotron 3 Super [10] for compliance judgment, deployed via NIM [12] on the enterprise's own DGX / Kubernetes environment, with support for air-gapped deployment. Inside the sandbox, the Agent reaches inference through inference.local, which the OpenShell gateway forwards to NIM on the host side [5]; credentials never enter the sandbox.
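From the Agent's side, the key property is that a request to inference.local carries no credential. The sketch below only builds such a request and does not send it. The URL scheme, the OpenAI-style chat completions path, and the model identifier are assumptions for illustration and are not taken from NemoClaw or NIM documentation.

```python
import json
import urllib.request

# Assumptions: scheme, path, and model name are placeholders for illustration.
INFERENCE_URL = "http://inference.local/v1/chat/completions"
MODEL_NAME = "nemotron-3-super"  # hypothetical model identifier


def build_inference_request(prompt: str) -> urllib.request.Request:
    """Build a request for the in-sandbox inference endpoint.

    No Authorization header is set: in the architecture described above,
    the gateway holds the credential on the host side.
    """
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        INFERENCE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_inference_request("Does this BOM meet the cooling requirement?")
print(request.host, request.has_header("Authorization"))  # prints "inference.local False"
```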

04 — ARCHITECTURE

System architecture

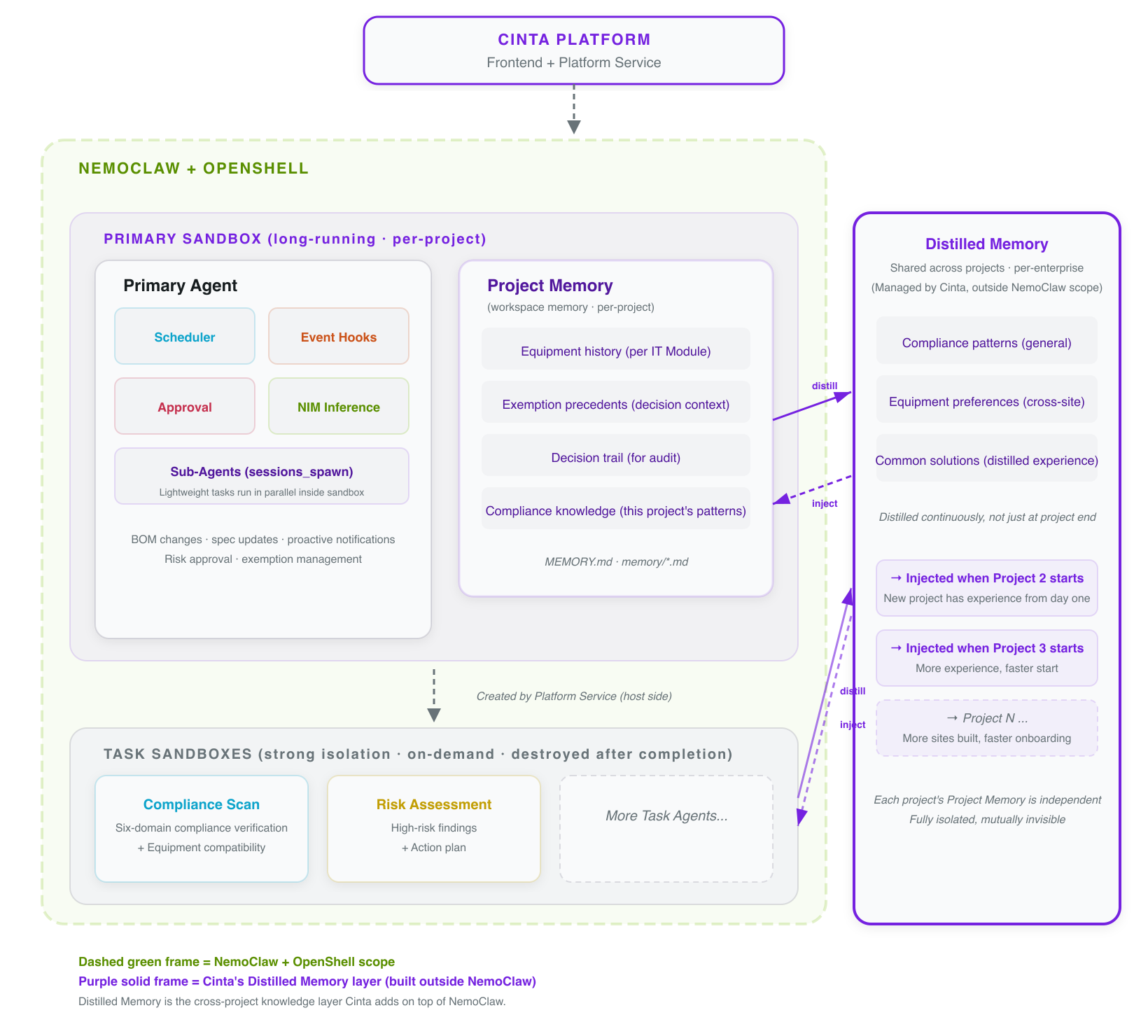

Cinta's orchestration breaks each construction project into a set of sandboxes. A long-running Primary Agent handles scheduling, event listening, and Project Memory management. Task execution follows two paths: lightweight tasks (such as standards comparison or summary generation) are handled in parallel inside the sandbox by the Primary Agent using OpenClaw sub-agents (sessions_spawn). Heavyweight tasks that require stronger isolation (such as full compliance scans or risk assessments) are run by the Cinta Platform Service, which creates a dedicated Task sandbox on the host with its own Task Agent and tears it down when finished. Across projects, Cinta maintains a shared Distilled Memory.

Figure 1. Cinta × NemoClaw system architecture: Sandbox + Project Memory inside the NemoClaw boundary, alongside Cinta's own cross-project Distilled Memory.

The Primary Agent runs continuously inside a long-lived OpenShell sandbox, and Project Memory (workspace memory) is preserved for the lifetime of that sandbox. Systems of record are written to an external durable layer in parallel, so findings, approvals, and decision trails survive even if the sandbox is destroyed. Lightweight tasks are handled by the Primary Agent using sub-agents inside the sandbox. Heavyweight tasks are run by the Cinta Platform Service in a dedicated host-side Task sandbox (with its own Task Agent), which is torn down when complete. As a project progresses, experience that generalizes across projects is continuously distilled into Distilled Memory, becoming a reusable knowledge asset across sites.
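The two execution paths can be sketched as a small dispatch function. The task type names come from the examples in the text. The enum, the set-based classification, and the choice to send unknown task types to the more isolated path are assumptions made for this illustration.

```python
from enum import Enum


class ExecutionPath(Enum):
    SUB_AGENT = "sub_agent"        # runs inside the Primary Agent's sandbox
    TASK_SANDBOX = "task_sandbox"  # dedicated sandbox created on the host


# Hypothetical classification, based on the examples given in the text.
LIGHTWEIGHT_TASKS = {"standards_comparison", "summary_generation"}
HEAVYWEIGHT_TASKS = {"full_compliance_scan", "risk_assessment"}


def choose_path(task_type: str) -> ExecutionPath:
    """Pick an execution path for a task type."""
    if task_type in LIGHTWEIGHT_TASKS:
        return ExecutionPath.SUB_AGENT
    if task_type in HEAVYWEIGHT_TASKS:
        return ExecutionPath.TASK_SANDBOX
    # Assumption: unknown task types fall back to the more isolated path.
    return ExecutionPath.TASK_SANDBOX


print(choose_path("summary_generation").value)  # prints "sub_agent"
print(choose_path("risk_assessment").value)     # prints "task_sandbox"
```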

Figure 2. Inference Routing: Sandbox → OpenShell Gateway → NIM (Nemotron 3 Super).

NIM can be deployed on a DGX inside the same enterprise local network as OpenShell, so compliance data never has to leave the enterprise network.

Two memory layers: Project Memory and Distilled Memory

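You may be building three data centers at once. Each site has its own design, its own compliance issues, its own equipment configuration. But a lot of experience is generalizable: a lesson learned on the first site shouldn't have to be rediscovered on the second. Cinta uses a layered memory design to handle this. Project Memory holds each project's working context inside that project's sandbox, while Distilled Memory holds the generalizable experience that is shared across projects.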
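One way to picture the two layers is as a per-project store plus a shared store that receives only generalized lessons. The sketch below is illustrative: the class names mirror the terms in the text, but the note fields (`pattern`, `resolution`, `generalizable`) and the `distill` function are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectMemory:
    """Per-project working memory (hypothetical structure)."""
    project_id: str
    notes: list[dict] = field(default_factory=list)


@dataclass
class DistilledMemory:
    """Cross-project lessons with project identifiers stripped."""
    lessons: list[dict] = field(default_factory=list)


def distill(project: ProjectMemory, shared: DistilledMemory) -> int:
    """Copy generalizable notes into shared memory; return how many were added."""
    added = 0
    for note in project.notes:
        if not note.get("generalizable"):
            continue
        # Keep only the reusable pattern, not the project it came from.
        lesson = {"pattern": note["pattern"], "resolution": note["resolution"]}
        if lesson not in shared.lessons:
            shared.lessons.append(lesson)
            added += 1
    return added


site_1 = ProjectMemory("site-1", [
    {"pattern": "cooling mismatch", "resolution": "resize CDU", "generalizable": True},
    {"pattern": "local permit delay", "resolution": "refile", "generalizable": False},
])
shared = DistilledMemory()
print(distill(site_1, shared))  # prints "1"
```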

Durable layer and isolation

Workspace memory is the Agent's working memory, but in an enterprise environment you can't keep systems of record only inside the sandbox. Cinta places durable state in an external layer and keeps only working context inside the sandbox:

External durable layer

All systems of record (findings, approval decisions, exemption authorizations, decision trails) are written to external systems. Compliance reports and BOM snapshots live in object storage; audit events flow into an append-only event store. Workspace memory is the Agent's working context, not the system of record.

The Agent reads context from workspace memory when making judgments, but the systems of record always come from the external durable layer.

Isolation model

Each construction project has its own Primary + Task sandboxes, each with its own policy, storage namespace, inference route, and audit trail. Cross-project generalizable experience can be distilled and reused, while each project's execution environment retains an independent boundary.

Your experience accumulates across projects; each project's sandbox and Project Memory remain fully independent.
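The per-project boundary can be sketched as a small immutable record with one entry per isolated resource. The naming scheme below (prefixing each resource with the project ID) is an assumption for illustration, not how NemoClaw or Cinta actually names policies or routes.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class ProjectBoundary:
    """Resources that belong to exactly one project (hypothetical naming)."""
    policy: str
    storage_namespace: str
    inference_route: str
    audit_trail: str


def boundary_for(project_id: str) -> ProjectBoundary:
    """Derive a project's resource names from its ID."""
    if not project_id:
        raise ValueError("project_id must be non-empty")
    return ProjectBoundary(
        policy=f"{project_id}/policy",
        storage_namespace=f"{project_id}/storage",
        inference_route=f"{project_id}/inference",
        audit_trail=f"{project_id}/audit",
    )


def is_isolated(a: ProjectBoundary, b: ProjectBoundary) -> bool:
    """True when two boundaries share no resource name."""
    return set(astuple(a)).isdisjoint(astuple(b))


print(is_isolated(boundary_for("site-1"), boundary_for("site-2")))  # prints "True"
```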

Audit and compliance records

The Agent's decision trail is written to two places in parallel: workspace memory (for the Agent's reference next time) and an external audit event store (for compliance auditing). The external records are immutable and survive sandbox teardown. Compliance audits look only at the external system, not at sandbox state.

What the auditor sees is always the immutable external record, not the Agent's working notes.
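The dual write can be sketched with an in-memory stand-in for the external audit event store. Everything here is illustrative: `AppendOnlyEventStore` and `record_decision` are hypothetical names, and the two writes happen one after the other for simplicity, whereas the text describes them as parallel.

```python
import json


class AppendOnlyEventStore:
    """In-memory stand-in for an external audit event store.

    It exposes only append and read; there is no update or delete.
    """

    def __init__(self) -> None:
        self._events: list[str] = []

    def append(self, event: dict) -> int:
        """Store a serialized copy of the event and return its offset."""
        self._events.append(json.dumps(event, sort_keys=True))
        return len(self._events) - 1

    def read_all(self) -> tuple[dict, ...]:
        """Return fresh copies, so callers cannot alter stored events."""
        return tuple(json.loads(raw) for raw in self._events)


def record_decision(
    event: dict, workspace_memory: list[dict], audit_store: AppendOnlyEventStore
) -> int:
    """Write one decision to the audit store and to workspace memory."""
    offset = audit_store.append(event)     # system of record
    workspace_memory.append(dict(event))   # Agent's working notes
    return offset


audit_store = AppendOnlyEventStore()
workspace_memory: list[dict] = []
record_decision({"finding": "f-1", "decision": "waived"}, workspace_memory, audit_store)

workspace_memory.clear()  # simulate sandbox teardown
print(len(audit_store.read_all()))  # prints "1"
```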

Approval state management

The Agent doesn't sit blocked while waiting for approval. It writes the approval request to the external durable layer and ends the session. Once a manager approves, the Platform API triggers a new session through a webhook to continue processing. A dropped connection isn't a problem, because no session is waiting: all the state is in the external system.

This design lets approvals span hours or days without worrying about connection interruptions.
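The pattern can be sketched with a plain dictionary standing in for the external durable layer. The function names, the status values, and the idempotent handling of repeated webhook calls are assumptions for this illustration, not Cinta's Platform API.

```python
import uuid


def request_approval(store: dict, finding_id: str, action_plan: str) -> str:
    """Persist an approval request and return its ID; the session can then end."""
    request_id = str(uuid.uuid4())
    store[request_id] = {
        "finding_id": finding_id,
        "action_plan": action_plan,
        "status": "pending",
    }
    return request_id


def on_approval_webhook(store: dict, request_id: str, approved: bool) -> str:
    """Handle a manager's decision and say what the platform should do next."""
    request = store[request_id]
    if request["status"] != "pending":
        # Assumption: repeated webhook deliveries are ignored.
        return "ignored"
    request["status"] = "approved" if approved else "rejected"
    # An approval starts a new session; a rejection closes the request.
    return "resume" if approved else "closed"


durable_store: dict = {}
request_id = request_approval(durable_store, "f-1", "Replace CDU before rack install")
# Hours or days may pass here with no session running.
print(on_approval_webhook(durable_store, request_id, approved=True))  # prints "resume"
```

Because the only shared state is the stored request, the session that handles the approval does not need to be the one that raised it.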

05 — NOTES

Notes

- NemoClaw / OpenShell maturity: As of May 2026, NVIDIA's NemoClaw architecture reference [4] labels NemoClaw as alpha software with the note "APIs and behavior may change without notice. Do not use in production." OpenShell's public docs [7] do not carry an explicit maturity label. The architecture in this post reflects the current APIs and behaviors and may change as those evolve.

06 — CONCLUSION

Summary

This article walked through the NemoClaw-centric Agent execution architecture behind Cinta (OpenShell provides the sandbox, OpenClaw provides the agent runtime, NemoClaw assembles and configures), the design of inference routing, the layered memory design (Project Memory + Distilled Memory), and the external durable layer and isolation model.

This architecture is more than a technology choice. It's the foundation that lets AI Agents become trustworthy and usable in AI Factory contexts.

REFERENCES

References

Omniverse / DSX Blueprint

- [1] NVIDIA Omniverse DSX Blueprint Documentation

- [2] Omniverse DSX Blueprint Blog (NVIDIA)

- [3] Vera Rubin DSX AI Factory Reference Design Announcement

NemoClaw / OpenShell / OpenClaw

- [4] NVIDIA NemoClaw Architecture Reference

- [5] NemoClaw Inference Options: Local NIM & Provider Routing

- [6] NemoClaw Workspace Files Reference

- [7] How OpenShell Works: Components & Request Flow

- [8] Run Autonomous Agents More Safely with NVIDIA OpenShell (Technical Blog)

- [9] OpenClaw Documentation